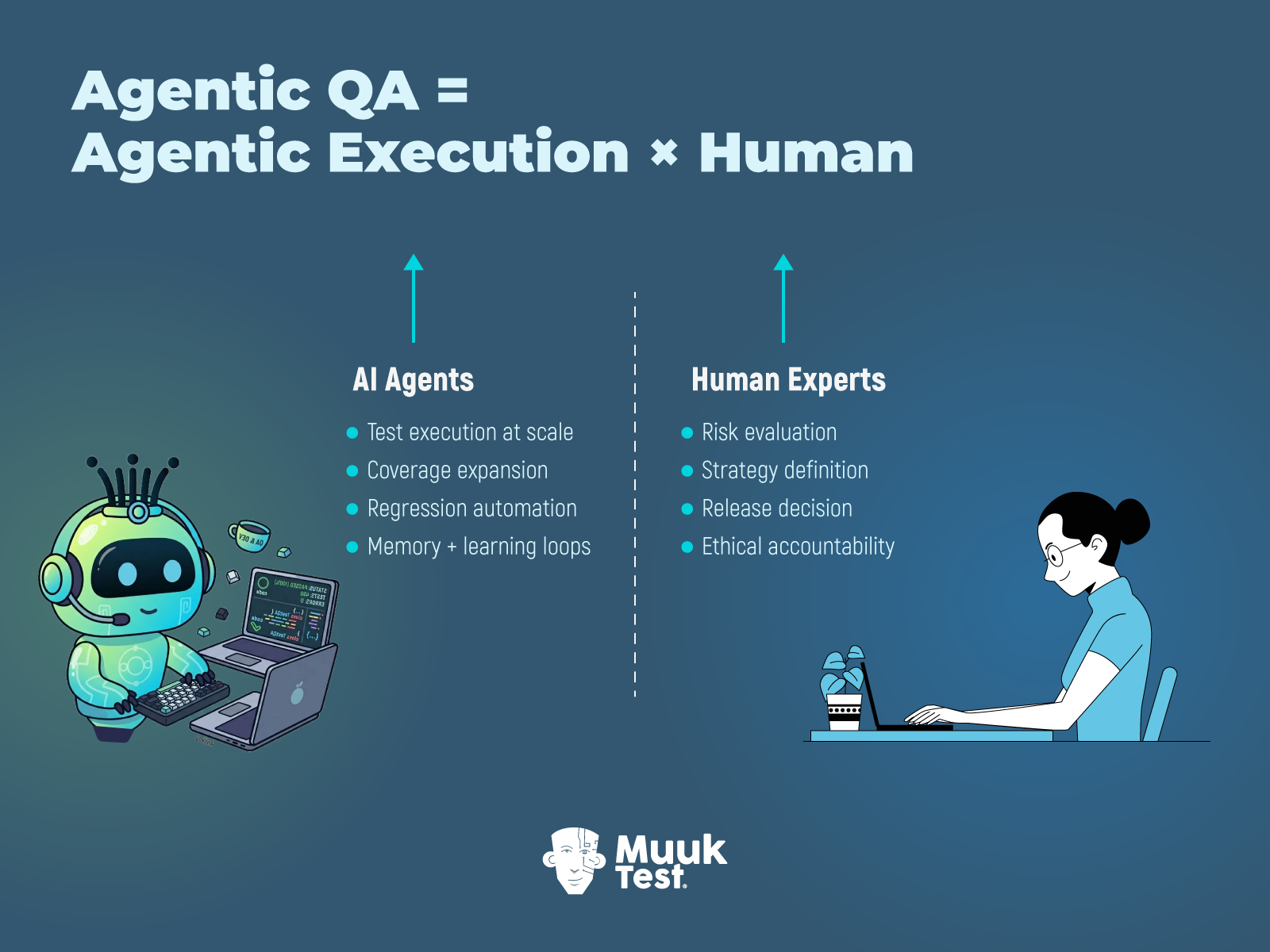

- Agentic QA combines AI agents with human expertise to scale software testing without losing judgment or accountability.

- AI agents handle execution at scale — expanding coverage, maintaining regression suites, and generating structured test artifacts.

- Humans retain decision authority — defining intent, evaluating risk, interpreting results, and making release trade-offs.

- Unlike autonomous AI QA, Agentic QA preserves human-in-the-loop oversight, reducing hallucinations, shallow coverage, and false confidence.

- The 80–20 model separates operational workload from strategic judgment, allowing teams to increase speed without outsourcing responsibility.

This post is part of a 4-part series, Fight Fire with Fire - QA at the Speed of AI-Driven Development:

1. What to Do When QA Can’t Keep Up With AI-Assisted Development

2. The Myth of AI-Only QA: Why Human Oversight Still Matters

3. Agentic QA: Combining AI Agents & Human Expertise for Smarter Testing ← You're here

4. Rewriting the QA Playbook for an AI-Driven Future - March 24th, 2026

Speed is easy to promise. Judgment is not.

AI-powered QA promises speed, and sometimes that speed is impressive compared to a human tester. However, speed alone does not comprehend context, weigh trade-offs, or recognize when a test passes while the product quietly fails its users, its intended purpose, or its ethics. That gap is not just theoretical. It shows up in real testing work every day.

Agentic QA is a concept designed to fill this gap. It combines AI agents with human expertise. Instead of pretending that intelligence can be automated end-to-end, Agentic QA makes a strategic and sane decision: let machines do what machines do best, and let humans do what humans do best.

AI agents handle the operational load. They execute. They observe. They repeat without fatigue. Humans step in where judgment matters. Where context shifts. Where risks compete. Where “passed” is not the same as “acceptable.”

In this article, we will:

- Understand what Agentic QA means in real testing work

- Learn how an AI testing agent works step by step

- Understand where AI agents fail without human expertise

- See how MuukTest Amikoo operationalizes Agentic QA using an 80–20 model

What Is Agentic QA in Software Testing?

Agentic QA is not a tool, but a concept. It is a division of responsibility. At its core, Agentic QA pairs AI agents with human expertise, not to blur roles, but to clarify them.

AI agents take on the operational weight. They execute tests. They scale coverage. They handle repetition without complaint or fatigue.

Humans do the rest. The harder part. They bring judgment. They supply intent. They carry the ethical responsibility for “tested well enough to release”.

Agentic QA = Agentic Execution × Human Judgment

Agentic Execution = Scalable test execution + Coverage expansion + Repetitive work at speed

Human Judgment = Intent setting + Result interpretation + Ethical ownership of testing decisions

× (Multiplication) = zero value if either side is missing.

This distinction matters because testing has never been about execution alone. Running checks is easy. Deciding what the results actually mean, and whether they reflect the truth about the product, is where testing earns its name.

Agentic QA makes that separation explicit. It allows teams to scale testing without manufacturing false confidence, to use AI for speed without outsourcing responsibility, and to keep humans accountable for the decisions that shape product quality.

Testers do not disappear in this model. They move up the value chain, toward judgment, interpretation, and ownership.

How Agentic QA Differs from Traditional Test Automation and Autonomous AI QA

Most testing teams talk about AI as if everyone means the same thing. They don’t.

- Sometimes “AI” means scripted automation with better marketing.

- Sometimes “AI” means fully autonomous systems making decisions on their own.

- Often, it means a vague middle ground that nobody has clearly defined, but everyone is comfortable investing in.

This confusion creates poor decisions. Before you can evaluate Agentic QA, you need clarity on how it differs from what already exists. Agentic QA is not a cosmetic upgrade to existing automation, nor is it a softer version of autonomous AI. It represents a different way of structuring work, making decisions, and assigning accountability, and those differences directly affect risk exposure, quality signals, and the outcomes testing ultimately produces.

Here is a side-by-side comparison of Traditional Test Automation, Autonomous AI QA, and Agentic QA:

| Dimension | Traditional Test Automation | Autonomous AI QA | Agentic QA |
| --- | --- | --- | --- |
| Core idea | Script-based execution of predefined checks | AI-driven checks with minimal or no human involvement | AI agents working with human expertise and supervision |
| Primary goal | Repeatability and regression coverage | Maximum autonomy and scale | Balanced scale and judgment |
| Role of AI | Assists test script authoring | Generates, executes, and evaluates checks on its own | Reasons, plans, and generates testing artifacts |
| Role of expert testers | Design, maintain, and debug scripts | Mostly absent from day-to-day testing | Define intent, review output, make judgments, and steer learning |
| Adaptability to change | Low. Scripts break as systems change | High, but risky. Non-deterministic | High, with bounded responsibility |
| Handling of ambiguity | Poor. Requires explicit instructions | Appears confident but suffers from LLM failure modes such as hallucination | Managed through agent constraints and a human in the loop |
| Approach to test design | Static and upfront | Dynamic but uncontrolled | Dynamic and guided by human intent |
| Learning over time | None. Scripts do not learn | Learns, but without accountability. Context changes over time | Learns within defined boundaries |
| Ethical responsibility | Implicit, and with the testing team | Weak or undefined | Explicit and preserved |
| Risk of false confidence | Low, but can arise from brittle automation or poor assertions | High due to hallucination and self-greening risks | Moderated through controlled collaboration and a continuous review loop |

How AI Testing Agents Work (Step-by-Step Example)

To understand Agentic QA, you must first understand how an AI agent actually operates inside a testing workflow.

This section breaks that operation into clear steps. Each step shows what happens internally. Each step is paired with a concrete example of a test case design agent in action.

Step 1: Context setting and knowledge base formation

An AI testing agent begins by gathering the inputs that set its context. These inputs form its working knowledge base.

Typical inputs include:

- Requirements

- User stories

- Screenshots

- API specifications

- Existing test cases

- Workflow steps, etc.

At this stage, the agent is not generating anything. It is collecting, organizing, and defining the boundary of the feature it is allowed to reason about. The goal here is scope, not insight.

Test case design agent example

The agent is given useful context about the application under test, such as:

- A sample user story

- API specifications

- Existing happy-path test cases

- Screenshots of the UI or Wireframe images, etc.

Together, these inputs define the feature boundary within which the agent will operate.
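As a minimal sketch of this step, the inputs can be pictured as a simple container that also reports which input categories are still empty. The `KnowledgeBase` class, its field names, and the sample values are illustrative assumptions, not the data model of any particular agent.

```python
from dataclasses import dataclass, field


@dataclass
class KnowledgeBase:
    """Hypothetical container for the inputs that define the feature boundary."""
    feature: str
    user_stories: list[str] = field(default_factory=list)
    api_specs: list[str] = field(default_factory=list)
    existing_tests: list[str] = field(default_factory=list)
    screenshots: list[str] = field(default_factory=list)

    def missing_inputs(self) -> list[str]:
        """Return the names of input categories that are still empty."""
        categories = {
            "user_stories": self.user_stories,
            "api_specs": self.api_specs,
            "existing_tests": self.existing_tests,
            "screenshots": self.screenshots,
        }
        return [name for name, items in categories.items() if not items]


kb = KnowledgeBase(
    feature="checkout",
    user_stories=["As a shopper, I can pay for the items in my cart."],
    existing_tests=["Happy path: pay by card and see the order confirmation"],
)
print(kb.missing_inputs())  # ['api_specs', 'screenshots']
```

Surfacing the empty categories early gives the human tester a chance to supply missing context before the agent starts reasoning.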

Step 2: Preprocessing and feature understanding

After the knowledge base and context information are loaded into the agent, preprocessing starts automatically.

The agent performs the following tasks:

- Parsing the inputs

- Extracting key details

- Identifying key elements and entities

- Mapping dependencies

- Establishing relationships

Raw artifacts are converted into a structured internal representation that the agent can reason over. This step creates understanding, not conclusions.

Test case design agent example

From the data loaded in the previous step, the agent identifies key elements such as:

- Cart functionality

- Pricing functionality

- Discounts functionality

- Payment method functionality

- Order confirmation functionality

It also maps dependencies such as:

- Payment depends on cart state

- Order confirmation depends on the payment outcome
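The two dependencies above can be sketched as a small graph. This is an illustrative representation only: the element names and the `affected_by` helper are assumptions, and a real agent's internal representation is likely richer.

```python
from graphlib import TopologicalSorter

# Each key depends on the elements in its set (taken from the example above).
dependencies = {
    "payment": {"cart"},
    "order_confirmation": {"payment"},
}

# A valid order in which the elements have to work for the flow to complete.
order = list(TopologicalSorter(dependencies).static_order())
print(order)  # ['cart', 'payment', 'order_confirmation']


def affected_by(element: str, deps: dict[str, set[str]]) -> set[str]:
    """Return every element that directly or indirectly depends on `element`."""
    affected: set[str] = set()
    frontier = [element]
    while frontier:
        current = frontier.pop()
        for node, prerequisites in deps.items():
            if current in prerequisites and node not in affected:
                affected.add(node)
                frontier.append(node)
    return affected


print(sorted(affected_by("cart", dependencies)))  # ['order_confirmation', 'payment']
```

A map like this lets later steps ask which downstream areas deserve retesting when one element changes.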

Step 3: Prompt-based task activation

After understanding the feature, the agent waits for a task. The task is supplied through a user prompt or a preconfigured set of instructions (system prompts) that define the task boundary. The prompt gives the task its direction.

Examples include:

- Design boundary tests for this API

- Identify risks and missing cases

- Generate test scenarios for checkout flow

The prompt activates the task.

Test case design agent example

The agent could be queried with a user prompt such as:

“Identify missing test scenarios and risk areas in the checkout flow.”

This sets direction while leaving room for exploration and judgment within the defined scope.
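A rough sketch of how the standing instructions and the task prompt might be combined into one request follows. The `role`/`content` message shape mirrors a common chat convention and is an assumption here, as are the function name and the system prompt text.

```python
def build_messages(system_prompt: str, user_prompt: str) -> list[dict[str, str]]:
    """Combine the standing instructions and the task into one request."""
    if not user_prompt.strip():
        raise ValueError("a task prompt is required to activate the agent")
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": user_prompt},
    ]


messages = build_messages(
    # Hypothetical system prompt that fixes the task boundary.
    system_prompt="You design test cases. Stay within the checkout feature.",
    user_prompt="Identify missing test scenarios and risk areas in the checkout flow.",
)
for message in messages:
    print(message["role"], "->", message["content"])
```

Keeping the boundary in the system prompt and the task in the user prompt means the task can change between runs while the scope stays fixed.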

Step 4: Planning and task decomposition

Before producing any output, the agent plans.

It decomposes the task into smaller reasoning steps, such as:

- Understanding feature behavior

- Identifying test ideas

- Applying relevant test techniques

- Validating them against known constraints

Planning is what separates reasoning from guesswork. It gives a structure to work against.

Test case design agent example

The agent plans to:

- Review checkout rules and conditions

- Identify boundaries in price, quantity, and payment states

- Apply negative and boundary-focused test techniques

- Compare against existing coverage
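The "identify boundaries" part of this plan can be illustrated with classic boundary value analysis. The sketch below assumes an inclusive integer range; the quantity limits of 1 to 10 are made up for the example and do not come from any real checkout rule.

```python
def boundary_values(minimum: int, maximum: int) -> list[int]:
    """Boundary value analysis for an inclusive integer range."""
    if minimum > maximum:
        raise ValueError("minimum must not exceed maximum")
    candidates = [
        minimum - 1, minimum, minimum + 1,
        maximum - 1, maximum, maximum + 1,
    ]
    # Drop duplicates that appear when the range is very narrow, then sort.
    return sorted(set(candidates))


# Hypothetical rule: a cart line accepts quantities from 1 to 10.
print(boundary_values(1, 10))  # [0, 1, 2, 9, 10, 11]
```

The values just outside the range (0 and 11 here) become negative tests, while the values on and just inside the limits become boundary tests.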

Step 5: Retrieval and tool-assisted reasoning

To ground its reasoning, the agent retrieves supporting context.

This may include things that are available in its knowledge base, such as:

- Requirements

- Existing test cases

- Known issues

- Risk lists

- Domain rules, such as ranges and validations

Retrieval reduces unsupported assumptions and anchors reasoning in historical and domain knowledge.

Test case design agent example

The agent pulls:

- Previous checkout defects

- Known payment failures

- Discount rules

- Existing regression coverage

These signals inform which areas deserve deeper testing and attention.
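To make the idea of retrieval concrete, here is a deliberately simple sketch that ranks knowledge-base entries by word overlap with the query. Production agents typically use embedding-based search instead; the word-overlap scoring, the `retrieve` function, and the sample entries are stand-in assumptions.

```python
def retrieve(query: str, documents: list[str], top_k: int = 2) -> list[str]:
    """Rank documents by how many query words they share (toy retrieval)."""
    query_words = set(query.lower().split())
    scored = []
    for doc in documents:
        overlap = len(query_words & set(doc.lower().split()))
        if overlap:
            scored.append((overlap, doc))
    # Highest overlap first; the sort is stable, so ties keep input order.
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:top_k]]


# Hypothetical knowledge-base entries.
knowledge_base = [
    "Defect: payment timeout leaves order in pending state",
    "Rule: discount code applies once per order",
    "Defect: profile avatar upload fails on large images",
]
print(retrieve("payment timeout during checkout", knowledge_base))
```

Only the payment defect shares words with the query, so it is the only entry returned; entries with no overlap are dropped rather than padded in.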

Step 6: Structured output generation

With planning and context in place, the agent generates output.

The output consists of structured testing artifacts such as:

- Test scenarios

- Edge cases

- Negative cases

- Preconditions and postconditions

- Test data suggestions

- Coverage gaps

Test case design agent example

The agent generates:

- Scenarios for invalid payment responses

- Edge cases and risks around discount thresholds

- Missing retry and timeout flows

- Gaps in coverage for partial failures

The output is organized and can now be reviewed and refined by a human tester.
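One possible shape for such an artifact is sketched below. The `Scenario` fields, including the `reviewed_by_human` flag, are assumptions chosen for illustration rather than a standard schema.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class Scenario:
    """Hypothetical structure for one generated test scenario."""
    title: str
    kind: str  # for example "edge", "negative", or "gap"
    preconditions: list[str] = field(default_factory=list)
    steps: list[str] = field(default_factory=list)
    expected: str = ""
    reviewed_by_human: bool = False  # stays False until a tester signs off


scenario = Scenario(
    title="Payment gateway returns an invalid response",
    kind="negative",
    preconditions=["Cart contains at least one item"],
    steps=["Submit payment", "Gateway replies with a malformed body"],
    expected="Order is not confirmed and the user sees a retry option",
)
print(json.dumps(asdict(scenario), indent=2))
```

Defaulting `reviewed_by_human` to `False` keeps generated output visibly separate from output a tester has accepted.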

Step 7: Memory update and continuity

Finally, after one iteration, the agent updates its working memory.

It records generated scenarios, feature relationships, task history, and context state. This memory supports continuity across future interactions and prevents unnecessary duplication. Memory enables incremental progress.

Test case design agent example

The agent updates its memory and stores:

- Checkout scenarios generated so far

- Relationships between payment and order confirmation

- Previously explored risk areas

This allows future prompts to build on past work instead of repeating it.
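A minimal sketch of duplicate prevention, assuming scenarios are identified by their titles: the `AgentMemory` class and its normalization rule are hypothetical, and real agents may compare scenarios by meaning rather than by text.

```python
class AgentMemory:
    """Hypothetical working memory that remembers scenario titles."""

    def __init__(self) -> None:
        self._seen: set[str] = set()
        self.scenarios: list[str] = []

    @staticmethod
    def _normalize(title: str) -> str:
        # Ignore case and repeated whitespace when comparing titles.
        return " ".join(title.lower().split())

    def add(self, title: str) -> bool:
        """Store a scenario; return False if an equivalent one already exists."""
        key = self._normalize(title)
        if key in self._seen:
            return False
        self._seen.add(key)
        self.scenarios.append(title)
        return True


memory = AgentMemory()
print(memory.add("Payment times out after card entry"))    # True
print(memory.add("payment times out  after card entry"))   # False
print(len(memory.scenarios))                               # 1
```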

Why AI Agents Fail Without Human Expertise

AI agents can analyze large inputs, plan complex tasks, and generate output at a scale no human team can match. That capability is real, and it is useful to a degree. The problem begins when their output is treated as truth instead of what it actually is: a starting point.

In real testing work, AI tool failures rarely show up as obvious errors. There is no red alert. Instead, the failure is subtle. It seeps into test coverage, influences trust, and quietly nudges teams toward decisions that feel justified but are grounded in incomplete understanding.

Tests exist. Reports look thorough. Dashboards feel reassuring. And yet, important risks remain unexamined. This happens because AI agents do not know when their understanding is shallow, when context is missing, or when a result should be challenged rather than accepted.

This section focuses on some common failure points where AI output blends into normal workflows and mistakes are absorbed silently.

Hallucination disguised as completeness

AI agents can confidently generate test cases, scenarios, and reports that appear thorough, structured, and finished, even when parts of that output rest on incorrect assumptions, missing context, or quietly invented details.

Impact on testing

- Test coverage appears strong on paper, but includes features and elements that are not even part of the test scope.

- Real gaps and missing information stay hidden, masked by hallucinated information.
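One partial safeguard is a scope check that flags generated scenarios referencing features outside the agreed boundary. The sketch below assumes each scenario is already tagged with the features it touches; the function name, the tags, and the sample scenarios are illustrative.

```python
def out_of_scope(
    scenarios: dict[str, set[str]], scope: set[str]
) -> dict[str, set[str]]:
    """Map each scenario to the features it references that are not in scope."""
    flagged = {}
    for title, features in scenarios.items():
        unknown = features - scope
        if unknown:
            flagged[title] = unknown
    return flagged


# Feature boundary from the checkout example.
scope = {"cart", "pricing", "discounts", "payment", "order_confirmation"}

# Hypothetical agent output, tagged with the features each scenario touches.
generated = {
    "Declined card shows an error": {"payment"},
    "Gift card balance is applied at checkout": {"payment", "gift_cards"},
}
print(out_of_scope(generated, scope))
```

A check like this only catches references to features that are out of scope. Invented details inside an in-scope feature still need a human reviewer.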

Incorrect assumptions

AI agents infer behavior from patterns in the data they see. When system boundaries are vague, undocumented, or inconsistently described, the agent fills the gaps on its own, drawing on patterns from its training data.

Those assumptions feel reasonable. But they are often wrong.

Impact on testing

- Effort goes to the wrong areas, while the unknown parts receive minimal attention.

- Cross-system risks are quietly deprioritized because they fall outside the agent’s assumed scope.

- Failures surface only after release, when real users trigger interactions that the AI tests never truly covered.

Over-weighting happy path scenarios

Most inputs available to an AI agent describe how the system is expected to work. Requirements, user stories, and acceptance criteria focus heavily on success, and AI agents mirror that emphasis. The result is predictable. Happy paths dominate.

Impact on testing

- Edge cases receive little attention.

- Failure paths remain under-tested, even though they carry the highest user and business risk.

- Confidence accelerates faster than coverage.
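A simple way to make this imbalance visible is to measure the mix of scenario kinds in a suite. The sketch below assumes scenarios are labeled by kind; the 70 percent threshold is an arbitrary illustration, not a recommended value.

```python
from collections import Counter


def path_mix(kinds: list[str]) -> dict[str, float]:
    """Return the share of each scenario kind in a suite."""
    counts = Counter(kinds)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {kind: count / total for kind, count in counts.items()}


def happy_path_heavy(kinds: list[str], threshold: float = 0.7) -> bool:
    """Flag a suite whose happy-path share exceeds the (assumed) threshold."""
    return path_mix(kinds).get("happy", 0.0) > threshold


# Hypothetical suite: eight happy-path scenarios, one negative, one edge case.
suite = ["happy"] * 8 + ["negative", "edge"]
print(path_mix(suite))
print(happy_path_heavy(suite))  # True
```

The number does not say which failure paths are missing. It only prompts a tester to look.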

Context decay across iterations

AI agents rely on stored context and accumulated memory to maintain continuity across tasks. Over time, as the product evolves and behaviors shift, that context can quietly drift away from how the system actually works today.

Nothing breaks immediately. The decay is gradual.

Impact on testing

- Obsolete scenarios reappear in new outputs, adding noise without increasing insight.

- Trust in the generated output erodes, and teams begin to treat the agent as a liability.
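Drift of this kind can be surfaced by comparing when a memory entry was recorded with when the related feature last changed. The sketch below is an assumption-laden illustration: the `MemoryEntry` fields, the 90-day age limit, and the idea of tracking a last-changed date per feature are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class MemoryEntry:
    summary: str
    feature: str
    recorded_on: date


def stale_entries(
    entries: list[MemoryEntry],
    last_changed: dict[str, date],
    max_age_days: int = 90,
    today: Optional[date] = None,
) -> list[MemoryEntry]:
    """Return entries that predate a feature change or exceed the age limit."""
    today = today or date.today()
    cutoff = today - timedelta(days=max_age_days)
    stale = []
    for entry in entries:
        changed = last_changed.get(entry.feature)
        too_old = entry.recorded_on < cutoff
        outdated = changed is not None and entry.recorded_on < changed
        if too_old or outdated:
            stale.append(entry)
    return stale


entries = [
    MemoryEntry("Discount applies before tax", "discounts", date(2025, 1, 10)),
    MemoryEntry("Card decline shows retry option", "payment", date(2025, 6, 1)),
]
flagged = stale_entries(
    entries, {"discounts": date(2025, 3, 1)}, today=date(2025, 6, 15)
)
print([entry.summary for entry in flagged])  # ['Discount applies before tax']
```

Flagged entries are candidates for human review, not automatic deletion, since an old entry may still describe current behavior.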

The 80–20 Operating Model of Agentic QA

Agentic QA operates on a deliberate split of responsibility. Not equal. Not blurred. But clear by design. The goal is to scale testing work without outsourcing judgment.

AI agents handle operational work

AI agents take on the bulk of repeatable, execution-heavy activity. This accounts for roughly 80 percent of their contribution and focuses on expanding and maintaining testing capacity.

Around 80 percent of their work lies in:

- Refactoring and maintaining test code

- Expanding reviewed test ideas into executable checks

- Drafting reports, coverage maps, and supporting artifacts

Around 20 percent of their work lies in:

- Fixing broken scripts where context is already well understood

- Proposing risk areas based on detected changes and historical signals

This work scales volume and consistency, not authority.

Humans own judgment and strategy

Humans remain accountable for quality decisions. Their 80 percent is not execution. It is thinking, prioritization, and responsibility.

Around 80 percent of their work lies in:

- Defining test strategy and quality goals

- Evaluating and challenging AI-generated output

- Questioning assumptions and reframing risk

- Making release trade-offs under real constraints

Around 20 percent of their work lies in:

- Hands-on exploratory testing

- Feeding domain context the agent cannot infer

- Seeding constraints, heuristics, and test charters

This model supports continuous testing across the SDLC. It also enables accountability in the QA process.
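The split of responsibility can be pictured as a routing rule, sketched here under stated assumptions: the task labels are paraphrased from the lists above, and the choice to send unclassified work to a human is an illustrative design decision, not something the model prescribes.

```python
# Task labels paraphrased from the lists above; the sets are illustrative.
AGENT_OWNED = {
    "maintain test code",
    "expand reviewed test ideas",
    "draft reports",
}
HUMAN_OWNED = {
    "define test strategy",
    "evaluate AI output",
    "make release trade-offs",
}


def owner(task: str) -> str:
    """Decide who is accountable for a task."""
    if task in AGENT_OWNED:
        return "agent"
    if task in HUMAN_OWNED:
        return "human"
    # Assumption: anything not yet classified stays with a person.
    return "human (unclassified)"


for task in ["draft reports", "make release trade-offs", "approve hotfix"]:
    print(task, "->", owner(task))
```

Defaulting unknown work to a human keeps new kinds of decisions from silently becoming the agent's responsibility.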

How MuukTest Amikoo Puts Agentic QA into Practice

MuukTest Amikoo puts Agentic QA into practice through its E-A-T (Expert - Amikoo - Testing Platform) model, in which AI agents and QA experts operate as a single, coordinated system rather than as separate roles.

The model follows the 80–20 split between AI Agents and QA Experts. The experts stay focused on judgment-heavy work, and in parallel, Amikoo takes care of the operational load.

During execution, Amikoo runs hundreds of tests in parallel while QA experts interpret results, challenge signals, and assess release risk. This structure enables scale without sacrificing accountability and supports continuous testing across the SDLC, from early planning through production.

MuukTest Amikoo E-A-T Model

The Strategic Shift Beyond Agentic QA

Agentic QA proves something important: intelligence at scale still requires responsibility.

It restores balance in a world where AI accelerates everything except accountability.

Teams that treat AI agents as authority will eventually pay for misplaced confidence. Teams that design judgment loops deliberately will scale without losing control.

But adopting Agentic QA is only one layer of the shift.

In the final part of this series, we zoom out and examine how QA must evolve strategically to thrive in AI-driven organizations.

Frequently Asked Questions

What is Agentic QA in software testing?

Agentic QA is a testing model in which AI agents handle scalable execution, while human experts retain judgment, risk evaluation, and release accountability. Instead of replacing testers, Agentic QA separates operational work from decision-making authority, balancing automation speed with human oversight.

How is Agentic QA different from autonomous AI testing?

Autonomous AI testing aims for minimal human involvement, allowing AI systems to generate, execute, and evaluate tests independently. Agentic QA, in contrast, preserves human-in-the-loop oversight. AI agents assist with execution and coverage, but humans define intent, validate outputs, and make final release decisions.

What does an AI testing agent actually do?

An AI testing agent collects contextual inputs such as requirements and existing test cases, processes dependencies, plans tasks, retrieves relevant knowledge, and generates structured testing artifacts like scenarios, edge cases, and coverage gaps. It can also update memory across iterations to improve continuity in testing workflows.

Why do AI agents need human oversight in QA?

AI agents operate probabilistically and may generate outputs based on incomplete context or incorrect assumptions. Without human oversight, subtle risks, hallucinated coverage, or shallow happy-path testing can go unnoticed. Human judgment ensures that results are interpreted correctly and aligned with real business risk.

What is the 80–20 model in Agentic QA?

The 80–20 model in Agentic QA divides responsibility between AI agents and human experts. AI handles roughly 80% of execution-heavy tasks such as test expansion and maintenance, while humans focus on strategy, risk evaluation, and accountability. This split enables scale without outsourcing judgment.

Is Agentic QA suitable for high-velocity engineering teams?

Yes. Agentic QA is particularly suited for AI-driven and high-velocity development environments. By allowing AI agents to scale operational testing while humans retain control over quality decisions, teams can increase coverage and speed without increasing false confidence or release risk.