- AI-assisted development increases delivery speed, but testing velocity often stays the same, creating a growing QA velocity gap.

- When QA can’t keep up, quality debt builds silently. Untested paths reach production, release confidence drops, and customer feedback becomes reactive.

- Continuous testing closes the velocity gap by moving QA earlier into ideation, planning, development, CI, and post-release monitoring.

- AI can accelerate testing tasks such as test case generation, regression automation, and test data creation, but expert judgment must stay in the loop.

- The future of QA in AI-driven teams is QA-in-the-loop, not QA-as-a-gate, embedding risk awareness into decisions rather than waiting until the end.

This post is part of a 4-part series, Fight Fire with Fire - QA at the Speed of AI-Driven Development:

1. What to Do When QA Can’t Keep Up With AI-Assisted Development ← You're here

2. The Myth of AI-Only QA: Why Human Oversight Still Matters

3. Agentic QA: Combining AI Agents and Human Expertise for Smarter Testing - March 18th, 2026

4. Rewriting the QA Playbook for an AI-Driven Future - March 24th, 2026

QA didn’t get worse. Development got faster. We are officially in the AI-assisted development world. You might have already started to see some of the symptoms.

The sprints feel tighter. Reviews stack up quickly. Code moves from idea to merge faster than QA can react. Pull requests pile up. Releases happen more often. QA capacity stays the same. This is not a productivity issue. It is a QA scalability and strategy problem. And fixing it changes how QA fits into modern development.

AI-assisted coding changed how fast software gets built. Most QA and test automation strategies are still trying to keep pace with these new changes. The result is a widening gap between development velocity and testing readiness. If development feels faster but risk feels higher, this article is for you. We will see how vibe coding and AI-assisted development change testing needs. We will also learn how to stay in control without slowing your teams.

Development Accelerated. QA Did Not.

Modern software development is evolving rapidly and looks very different now.

AI copilots and assistants are integrated directly into IDEs. Developers can generate working prototypes and bug fixes in minutes. Refactors that once took hours now take minutes. Experiments are getting cheap. Iteration cycles are speeding up.

The AI section of the Stack Overflow Developer Survey 2025 reflects this shift clearly. Over 80% of developers report regular use of AI-assisted coding tools. Sentiment toward AI tooling is overwhelmingly positive. Developers experiment more. Vibe coding is also slowly being picked up. Adoption currently sits at around 15 to 20%, and the sentiment suggests that number will only grow from here. However, traditional testing speed isn’t increasing at the same rate.

You do not need survey data to believe this. Your backlog probably already shows it.

- More commits per sprint

- Shorter merge and release cycles

- Higher change frequency across the codebase

- Test automation coverage that is still low

- Testing effort that still goes largely into heavy documentation for tests, cycles, and plans

Development velocity rises. Testing velocity isn’t increasing at the same pace. This creates a velocity gap (or delta).

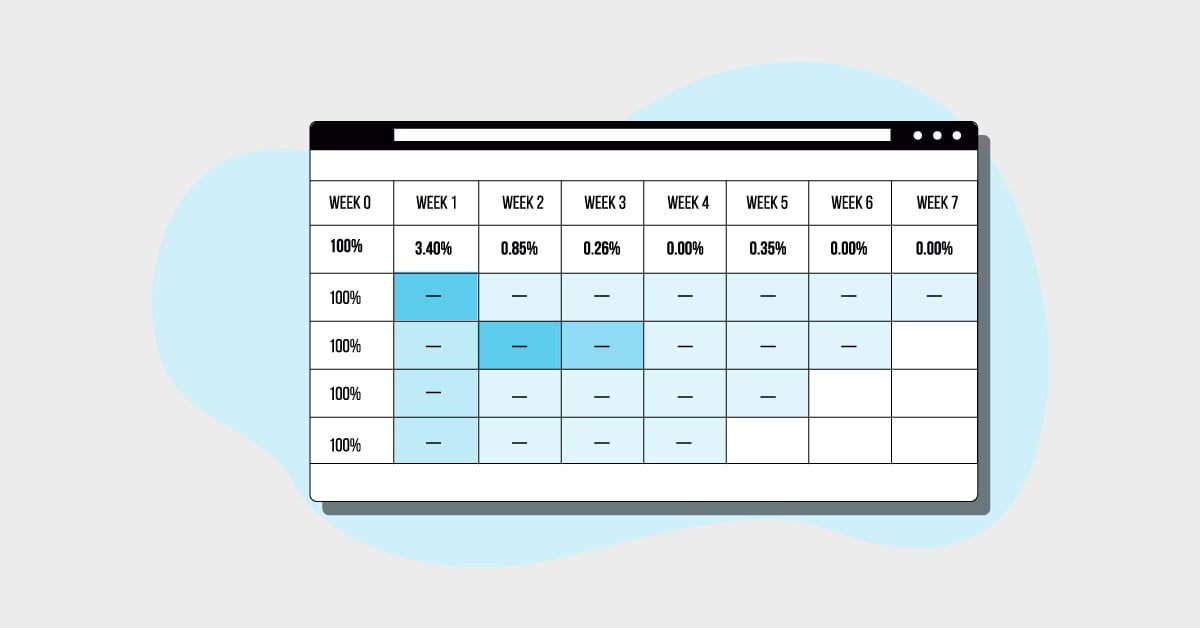

Velocity Delta (🔺) = Development Velocity − Testing Velocity.

Velocity Delta shows the gap between how fast features are built and how fast they are actually tested.

Case 1: If 🔺 is small, testing keeps up with development. Feedback is timely.

Case 2: If 🔺 grows, testing lags. Untested code piles up. Release confidence drops.

Case 3: If 🔺 explodes, you are in danger mode. The feedback cycle is in a different loop altogether.
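To make the formula concrete, here is a minimal sketch of how a team might track the velocity delta per sprint. It assumes "changes merged" and "changes tested" as stand-ins for development and testing velocity, and the 10% and 40% cut-offs between the three cases are illustrative assumptions, not values from any standard. Pick proxies and thresholds that fit your own backlog.

```python
from dataclasses import dataclass


@dataclass
class SprintStats:
    """Per-sprint counts; both counts are hypothetical proxies for velocity."""
    name: str
    changes_merged: int  # proxy for development velocity
    changes_tested: int  # proxy for testing velocity


def velocity_delta(stats: SprintStats) -> int:
    """Velocity Delta = Development Velocity - Testing Velocity."""
    return stats.changes_merged - stats.changes_tested


def classify(delta: int, changes_merged: int) -> str:
    """Map a delta onto the three cases; the 10% and 40% cut-offs are assumptions."""
    if changes_merged == 0:
        return "small"
    ratio = delta / changes_merged
    if ratio <= 0.10:
        return "small"    # Case 1: testing keeps up
    if ratio <= 0.40:
        return "growing"  # Case 2: testing lags
    return "danger"       # Case 3: the delta has exploded


sprints = [
    SprintStats("sprint-1", changes_merged=40, changes_tested=38),
    SprintStats("sprint-2", changes_merged=60, changes_tested=42),
    SprintStats("sprint-3", changes_merged=90, changes_tested=45),
]

for s in sprints:
    d = velocity_delta(s)
    print(f"{s.name}: delta={d} ({classify(d, s.changes_merged)})")
```

The absolute delta matters less than its trend. In this made-up data, the untested share climbs from 5% to 50% in three sprints, which is the pattern to watch for.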

A rising velocity delta is an indicator of quality debt.

- It puts customer satisfaction at risk and can lead to lost revenue.

- Feedback arrives late. Conversations shorten. Testing shifts from investigation to verification.

- Code that “looks fine” starts going into production. Not because it is safe, but because there is no time to explore deeper risk. This leads to more defects reaching production.

- Regression scope becomes unclear. Untested paths reach critical workflows.

- Customers find issues before testers do. Support tickets or dissatisfied customers become your primary feedback loop.

- Releases feel risky, not because failure is expected, but because risk is no longer understood.

- Soon, revenue impact shows up through churn, refunds, and negative sentiment.

When development accelerates but testing doesn’t, testing becomes a bottleneck. It’s not because testers are slow or unskilled, but because traditional QA models were designed for a slower pace of development.

Why Vibe Coding Breaks Traditional QA Models

Vibe coding is an emerging and popular technique in the software development world.

It is exploratory. Developers use AI-assisted coding to try ideas quickly, generate working prototypes, and iterate rapidly with minimal friction. The vibe matters. The flow matters.

However, traditional QA models are not built for this mode of development. They are still rooted in stable requirements, predictable workflows, deterministic test cases, and a dedicated testing period once the code freezes.

Hiring more testers will not solve this either. The shift requires adapting testing to the modern development lifecycle, not adding headcount.

When QA models stay rigid, feedback arrives late, context is lost, and real risks stay hidden behind mock demos. To support modern development, we need a rapid software testing approach.

Short loops. Fast experiments. Lightweight oracles. Fewer ceremonies. More feedback.

Continuous Testing to Close the Velocity Gap

When development speeds up through AI-assisted coding, testing cannot keep pace as a final checkpoint. Waiting until the end creates a lag in the system. Continuous testing removes that lag.

Continuous testing works because it changes where and when testing happens.

Instead of concentrating the entire testing effort at the end, it spreads testing across the development flow. Small checks at each stage replace big surprises at the end. Fast feedback arrives while decisions are still reversible.

This is how continuous testing reduces the velocity gap without slowing teams down.

1. Testing While Ideas Form (Ideation Phase)

Risks enter our software systems even before the code exists. Backlog discussions shape what gets built and how it behaves. Testing only after implementation increases the cost of reworking new ideas. If there are gaps or holes in the concept, they become critical risks once the software is implemented. That’s why testing at this stage saves a lot of rework.

At this stage, testing helps by asking simple but critical questions.

- What could fail in real use? (so that we can consider that as part of our strategy)

- What matters most to users? (so that we can define our minimum viable product scope)

- Which scenarios are unclear or underspecified? (so that we can gain more clarity on them before jumping into code)

- Where are assumptions hiding behind the story? (so that we can uncover the hidden and tacit requirements)

In short, you test the idea, not the code.

When testers participate early, stories gain clarity. Ambiguity gets resolved while changes are still inexpensive. Clarity gained here often prevents days of rework later.

2. Testing During Planning (Before Code Exists)

This is where continuous testing starts to influence outcomes directly. Here, testers bring early test ideas into planning conversations. They highlight risky paths, dependencies, and scenarios that deserve attention.

- Edge cases surface before development begins.

- Teams align on what “done” actually means.

- Acceptance criteria become testable statements instead of vague expectations.

- Developers build with test criteria in mind. They know what matters before they start coding.

- Bug prevention begins here, long before the first test runs.

Including testing from planning triggers the testing mindset right from the start. It saves a lot of thinking time at the end.
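As an illustration of turning a vague expectation into testable statements, here is a small sketch. The `apply_discount` function and its rules are hypothetical, invented only for this example. The point is the shape of the criteria: each one names an input and an expected outcome that can be checked before or during development.

```python
def apply_discount(total_cents: int, percent: int) -> int:
    """Hypothetical function under discussion; returns the discounted total in cents."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return total_cents - (total_cents * percent) // 100


# Vague expectation: "Discounts should work correctly."
# Testable statements: each check below pins down one agreed behavior.

def test_ten_percent_off_reduces_total():
    assert apply_discount(10_000, 10) == 9_000


def test_zero_percent_leaves_total_unchanged():
    assert apply_discount(10_000, 0) == 10_000


def test_discount_above_100_is_rejected():
    try:
        apply_discount(10_000, 150)
    except ValueError:
        return
    raise AssertionError("expected ValueError for percent > 100")


# Run the checks directly; a test runner could discover them as well.
for check in (
    test_ten_percent_off_reduces_total,
    test_zero_percent_leaves_total_unchanged,
    test_discount_above_100_is_rejected,
):
    check()
```

The third check is the kind of edge case that tends to surface in a planning conversation rather than in the story text itself.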

3. Testing While Code Takes Shape (Development Phase)

AI-assisted coding has accelerated the pace of this phase. We are moving from a creation bottleneck to a feedback bottleneck. Continuous testing keeps feedback close to creation.

- Early increments get tested as they appear via exploratory tests.

- Exploration and test design run alongside implementation instead of waiting for completion.

- This enables fast feedback. Also, the context stays fresh for the entire team.

- Pair testing works well here. Developers and testers see changes together.

- Risks surface while fixes are still small.

If this is done well, learning accelerates. This stage is where testing shifts from detection to understanding.

4. Testing During Final Checks (Regression & CI Phase)

This is the stage where you check your safety nets. You want to make sure there is no hidden smoke in the system.

- Automated regression checks run as part of Continuous Integration (CI).

- Security scans run in CI as part of the developer pipeline, looking for exposure.

- Performance tests signal app readiness. These checks act as guardrails, not guarantees.

- Human exploratory testers test out crucial flows and features.

Automation shines here because it handles repetition well. It catches obvious system breakage. It protects teams from AI-generated noise that slips through earlier stages.

Final checks work best when they confirm understanding built earlier, not when they are expected to discover everything. Remember, this can only work if testing is part of the previous phases.
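One way to picture "guardrails, not guarantees" is a small release signal that combines automated check results with the human exploration step. This is only a sketch under assumptions: the check names, the `blocking` flag, and the signal strings are all hypothetical and not tied to any particular CI system.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class CheckResult:
    name: str       # e.g. "regression", "security-scan", "performance"
    passed: bool
    blocking: bool  # assumption: the team decides which checks can block a release


def release_signal(results: List[CheckResult], critical_flows_explored: bool) -> str:
    """Summarize automated checks plus human exploration into one signal."""
    failed_blocking = [r.name for r in results if r.blocking and not r.passed]
    if failed_blocking:
        return "blocked: " + ", ".join(failed_blocking)
    if not critical_flows_explored:
        # Green automation alone is a guardrail, not a guarantee.
        return "needs-exploration"
    warnings = [r.name for r in results if not r.passed]
    if warnings:
        return "ready-with-warnings: " + ", ".join(warnings)
    return "ready"


results = [
    CheckResult("regression", passed=True, blocking=True),
    CheckResult("security-scan", passed=True, blocking=True),
    CheckResult("performance", passed=False, blocking=False),
]

print(release_signal(results, critical_flows_explored=False))
print(release_signal(results, critical_flows_explored=True))
```

Here passing automation never yields "ready" on its own. The signal stays at "needs-exploration" until someone has walked the crucial flows.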

5. Testing After Release (Shift-Right Phase)

Continuous testing does not stop at release. It extends well beyond it so that you can catch critical issues before your customers do.

- User acceptance testing gathers real feedback.

- Alpha and beta users validate assumptions in real environments.

- Monitoring and observability surface issues that are hard to predict outside the field.

- Logs, usage patterns, and performance signals keep giving valuable feedback.

This is how continuous testing closes the loop.
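As a rough sketch of what a post-release signal can look like, the snippet below compares the error rate after a release with the rate before it. The numbers are made up, and the 50% relative tolerance is an assumption. In practice the inputs would come from your own logs or monitoring tooling.

```python
def error_rate(errors: int, requests: int) -> float:
    """Fraction of requests that failed; 0.0 when there is no traffic."""
    return errors / requests if requests else 0.0


def regressed(baseline: float, current: float, tolerance: float = 0.5) -> bool:
    """True when the current rate exceeds the baseline by more than `tolerance` (relative)."""
    return current > baseline * (1 + tolerance)


# Hypothetical numbers read from logs or a monitoring dashboard.
before = error_rate(errors=20, requests=10_000)  # 20 failures per 10,000 requests
after = error_rate(errors=55, requests=11_000)   # 55 failures per 11,000 requests

if regressed(before, after):
    print("error rate regressed after release: investigate or roll back")
else:
    print("error rate within tolerance")
```

A check like this does not say what broke. It tells you where to point exploratory testing next, which is how the loop closes.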

Continuous testing shortens feedback cycles at every stage of software development. It keeps risk visible right from the start. It reduces last-minute surprises. It allows QA to scale with AI-assisted development without becoming a bottleneck.

That is how QA stays relevant when development speed keeps rising.


Embracing AI in Testing and Automation

AI is not just an accelerator for development but also for repeatable testing tasks.

Embracing AI-assisted testing requires a distinct skillset and mindset as a tester. Experts in the loop, working alongside AI agents, yield a good balance of judgment and efficiency. Here is MuukTest’s E-A-T model, which enables embracing AI in testing.

Leverage AI to handle repetitive testing tasks such as:

- Generating test data

- Drafting test cases from stories or commits

- Running basic UI checks

- Reviewing code standards

- Refactoring test scripts

These activities reduce the friction of inaction. However, they do not replace expert judgment. AI works best alongside an expert in the loop.
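Here is a minimal sketch of what "expert in the loop" can mean in practice for AI-drafted test cases. It is a generic illustration, not MuukTest’s model or any specific tool’s workflow. The class names, statuses, and sample titles are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class Status(Enum):
    DRAFT = "draft"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class DraftTestCase:
    title: str
    drafted_by: str  # "ai" or a person's name
    status: Status = Status.DRAFT
    reviewer: Optional[str] = None


def review(case: DraftTestCase, reviewer: str, approve: bool) -> DraftTestCase:
    """Record a human decision on a drafted test case."""
    case.status = Status.APPROVED if approve else Status.REJECTED
    case.reviewer = reviewer
    return case


def runnable_suite(cases: List[DraftTestCase]) -> List[str]:
    """Only human-approved cases make it into the suite."""
    return [c.title for c in cases if c.status is Status.APPROVED]


drafts = [
    DraftTestCase("login rejects expired password", drafted_by="ai"),
    DraftTestCase("login accepts any password", drafted_by="ai"),  # wrong on purpose
    DraftTestCase("checkout applies 10% coupon", drafted_by="ai"),
]

review(drafts[0], reviewer="tester-1", approve=True)
review(drafts[1], reviewer="tester-1", approve=False)
# drafts[2] is still unreviewed, so it stays out of the suite.

print(runnable_suite(drafts))
```

The AI does the drafting, which is the repetitive part. The decision about which drafts reflect real requirements stays with a person.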

If you want to do only one thing, start by using AI to assist test automation. It will offload repetitive checks so QA can move earlier into the development loop. It will reduce the overall velocity delta more than adding more testers ever will.

That is how QA can scale without slowing development.

From QA-as-a-Gate to QA-in-the-Loop

The future of QA is not at the end. It is spread across the development lifecycle. QA-as-a-gate waits for work to finish. QA-in-the-loop shapes decisions as work happens. It’s like a live feedback engine.

In AI-driven teams, human strengths are a core differentiator. Experts will have to take care of:

- Judgment

- Risk awareness

- Usability thinking

- Impact assessment

- Meaningful work

Not every team is ready for this shift. Not every tester can adapt at the same pace. That is normal. Many scaling teams work with partners like MuukTest to strengthen QA scalability, remove mechanical testing load, and give QA leaders space to focus on higher-value testing work instead of firefighting.


The Cost of Standing Still in AI-Assisted Development

“In the software business, it takes all the running you can do, just to stay in the same place.”

Gerald M. Weinberg

AI-assisted coding made delivery faster. It also made it easier to ship mistakes. If QA does not evolve, the velocity gap widens. Trust erodes quietly. Revenue loss starts slowly.

There is no magic pill for success. Even standing still comes at a cost. Teams that succeed despite these pressures keep testing close to decisions, close to code, and close to their users. If you also feel that QA can’t keep up with your AI-assisted development, it’s time to re-examine your strategy.

But as QA evolves, another important question arises: Can AI handle QA on its own? In Part 2 of this series, we’ll examine the limits of AI-only QA and why expert judgment becomes even more critical as automation advances.

Happy testing!

Frequently Asked Questions

Why can’t QA keep up with AI-assisted development?

AI-assisted development dramatically increases the speed and volume of code changes. Features, refactors, and experiments move faster than traditional QA processes were designed to handle. When testing is positioned as a final checkpoint rather than a continuous activity, a velocity gap forms between development and testing. The issue is not tester productivity; it's the mismatch between development speed and QA strategy.

What is the QA velocity gap?

The QA velocity gap is the difference between how fast software is developed and how fast it is meaningfully tested. When development accelerates through AI tools, but testing capacity stays the same, untested changes accumulate. Over time, this gap leads to quality debt, delayed feedback, and lower confidence in releases.

How does continuous testing help AI-driven teams?

Continuous testing spans the entire development lifecycle, from ideation and planning to CI and post-release monitoring. Instead of relying on large testing cycles at the end, teams receive smaller, faster feedback loops. This reduces risk early, shortens defect resolution time, and helps QA scale with AI-assisted development without becoming a bottleneck.

Can AI replace QA engineers?

AI can accelerate repetitive testing tasks such as generating test cases, creating test data, maintaining regression scripts, and running automated checks. However, AI cannot replace human judgment. Risk assessment, usability evaluation, edge-case thinking, and business impact analysis still require expert insight. The most effective model is expert-in-the-loop — combining AI efficiency with human decision-making.

How can QA scale without hiring more testers?

Scaling QA is less about adding headcount and more about changing positioning. Moving QA earlier into planning and development reduces rework. Automating repeatable regression tasks frees capacity. Embedding testing into CI/CD pipelines shortens feedback cycles. When QA shifts from gatekeeper to feedback engine, teams can increase quality coverage without proportionally increasing team size.

What happens if QA does not adapt to AI-driven development?

If QA remains structured around slower, sequential workflows, the velocity gap widens. Feedback arrives too late. Quality debt accumulates. Releases feel riskier. Over time, customer support becomes the primary detection mechanism for defects. Trust erodes gradually, and recovery becomes more expensive than prevention.
