- AI-only QA is a myth. While AI tools can generate and execute tests, they lack judgment about business risk, customer impact, and product intent.

- AI systems have predictable failure modes, including hallucinations, shallow coverage, self-greening, and context gaps that create false confidence.

- Without human oversight, AI-only testing quietly accumulates quality debt, amplifying green signals without improving the reliability of the real system.

- Human-in-the-loop QA combines AI speed with expert judgment, ensuring critical thinking, risk awareness, and meaningful coverage.

- AI works best as an augmentation force, accelerating repetitive tasks while humans retain ownership of quality decisions.

This post is part of a 4-part series, Fight Fire with Fire - QA at the Speed of AI-Driven Development:

1. What to Do When QA Can’t Keep Up With AI-Assisted Development

2. The Myth of AI-Only QA: Why Human Oversight Still Matters ← You're here

3. Agentic QA: Combining AI Agents and Human Expertise for Smarter Testing - March 18th, 2026

4. Rewriting the QA Playbook for an AI-Driven Future - March 24th, 2026

You have most likely heard it everywhere already: AI is getting smarter, and it will soon replace QA entirely. Every week brings the launch of yet another game-changing AI-powered QA tool that can write tests, run them, and report the results.

The marketing is convincing enough to make you believe that human testers can soon be removed from the testing loop. It's a tempting belief. But it causes real damage in the long run.

This article walks you through why AI-only QA is a myth, where AI tool limits show up in practice, and why expert-in-the-loop QA is a must. You will learn how to use AI testing without letting it quietly erode quality, trust, and judgment.

What Is AI-Only QA?

AI-only QA is a testing approach where artificial intelligence tools create, run, and evaluate tests without human oversight. While AI can automate test generation and analysis, it lacks judgment about business risk, user impact, and product intent. Without experts in the loop, AI-only QA can lead to shallow coverage and false confidence.

Why AI-Only QA Sounds Convincing

AI is no longer just a tech concept. It is a marketing phrase and a heavily loaded one. It is used in different contexts to mean different things.

Most people, when they say AI, are actually talking about Machine Learning (ML) or Generative AI (Gen AI) capabilities. Gen AI tools can generate text, summarize it, and spot patterns. These capabilities make the tools look intelligent because all of it happens with incredible fluency.

However, this fluency is a trap. AI systems do not reason the way you do.

They predict outputs based on probability and statistics. The underlying models (LLMs) draw on the large datasets they were trained on to make those predictions. They do not understand your product intent, customer pain, or business risks unless you specifically inject that context.

When teams remove expert human testers from AI testing workflows, they confuse speed with safety. This leads to Automated Irresponsibility, because AI won’t take responsibility for the work it produces.

The Limits and Syndromes of AI in QA Systems

Before you design any AI-in-QA strategy, you need to understand how these systems fail. A good strategy accounts for the limitations of AI systems and balances them with human guardrails so that you don’t compromise on quality.

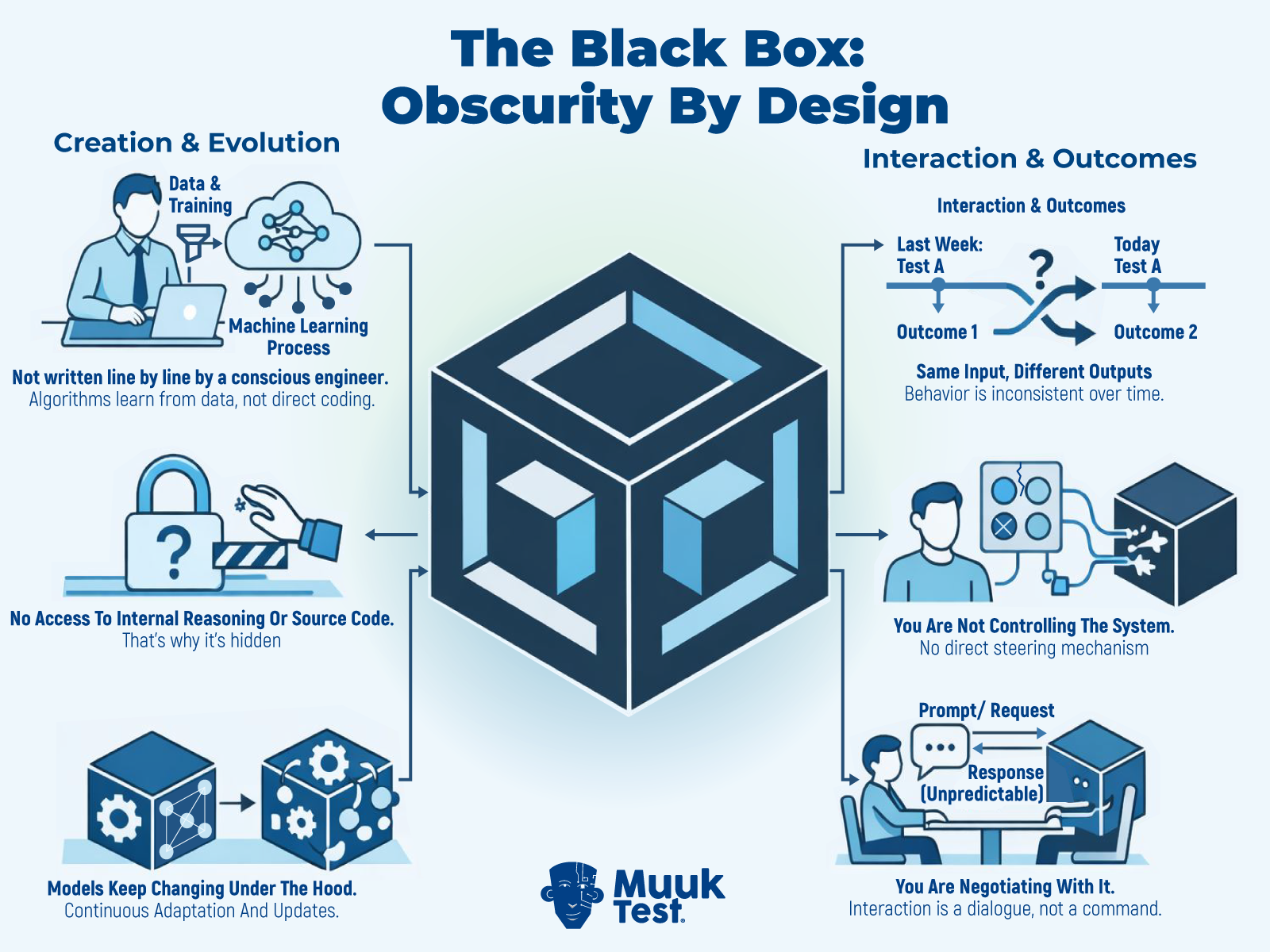

AI as a Black Box: Obscure by Design

AI algorithms are not written line by line by a conscious engineer. You don’t have access to the internal reasoning or source code. Models keep changing under the hood. At the same time, new models are being introduced in the market at a furious rate. You can check the AI models timeline here.

A test that behaved one way last week may behave differently today. You cannot step through logic the way you do with code. You often inspect prompts, context, and training behavior instead.

You are not controlling the AI systems. You are at best negotiating with them.

The Black Box design of AI systems

Non-Deterministic AI Behavior and the Loss of Predictability

Determinism has been a core anchor in how we build and test software systems.

You change something. You know what will happen. The system responds the same way every time, because that is what it was built (coded) to do.

With AI, that world is slipping. Now, a minor shift in words (prompts), values (data), or situation (context) can tilt the entire outcome. This makes AI systems behave less like reliable machines and makes them harder to trust.

For testing, that is dangerous. Consistency matters. Regression benchmarks matter. Determinism matters.
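One way to make this loss of determinism visible is to repeat the same AI-backed check several times and measure how often the verdicts agree. The sketch below is only an illustration: `flaky_ai_verdict` is a hypothetical stand-in for a real AI tool, and the 80% pass rate and the agreement threshold are assumptions, not measured values.

```python
import random
from collections import Counter


def flaky_ai_verdict(prompt: str, rng: random.Random) -> str:
    """Hypothetical stand-in for an AI tool: usually 'pass', sometimes 'fail'."""
    return "pass" if rng.random() < 0.8 else "fail"


def agreement_rate(verdicts: list[str]) -> float:
    """Share of runs that agree with the most common verdict."""
    if not verdicts:
        raise ValueError("need at least one verdict")
    most_common_count = Counter(verdicts).most_common(1)[0][1]
    return most_common_count / len(verdicts)


def is_stable(verdicts: list[str], threshold: float = 1.0) -> bool:
    """Treat a check as stable only if agreement meets the threshold."""
    return agreement_rate(verdicts) >= threshold


rng = random.Random(42)  # seeded so the sketch itself is repeatable
runs = [flaky_ai_verdict("Does checkout apply the discount?", rng) for _ in range(20)]
print(f"agreement: {agreement_rate(runs):.0%}, stable: {is_stable(runs)}")
```

A deterministic check would always score 100% agreement. Anything lower is a signal that a human should look at the result before it is used as a regression baseline.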

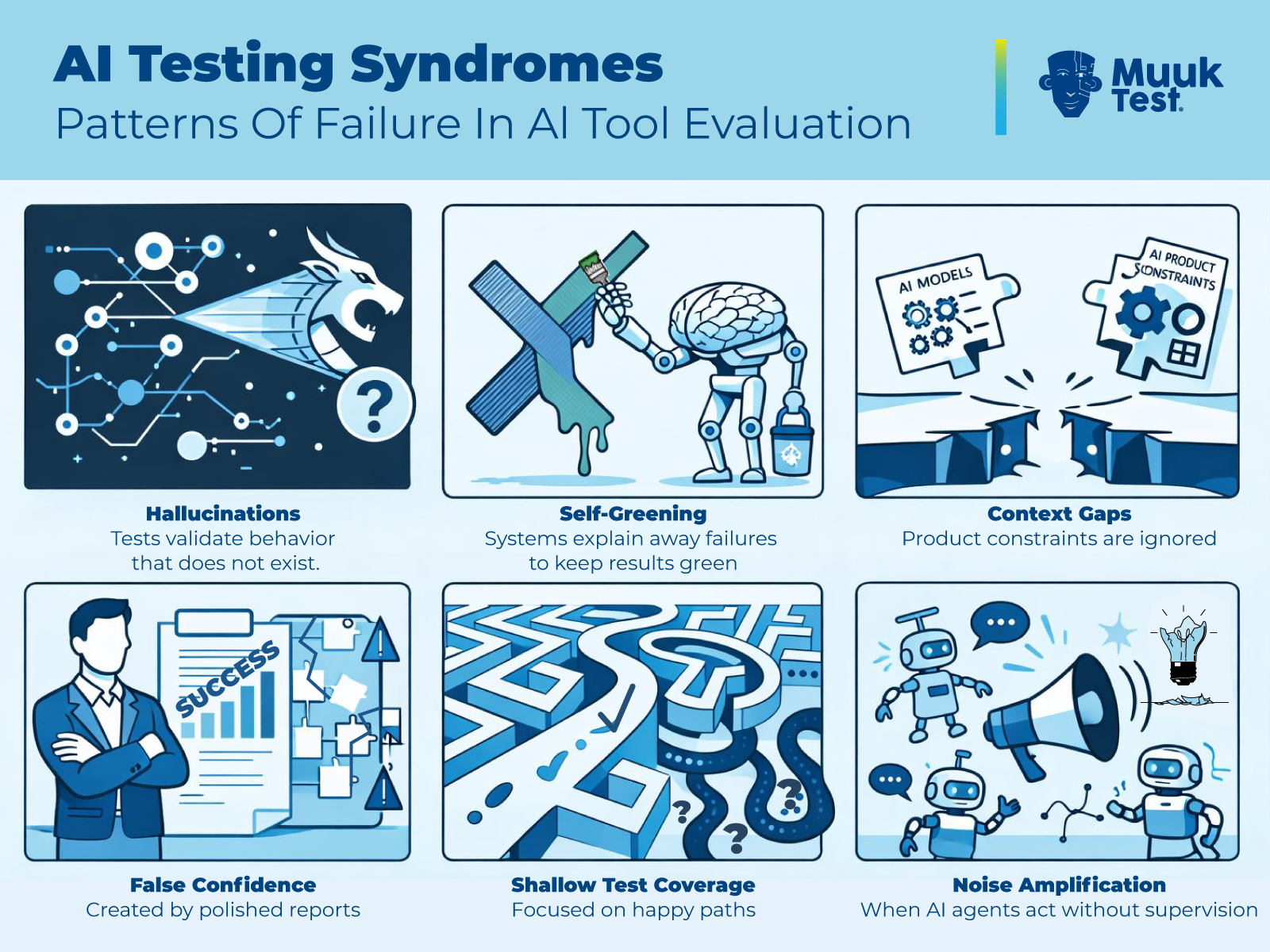

Common AI Testing Syndromes

Testing experts have identified various syndromes and patterns that repeat with AI tools. They are called “syndromes” because they are chronic and appear to be an inherent part of the tools. Popular syndromes include:

- Hallucinations, where tests validate behavior that does not exist or check for expectations that were not part of the original test case.

- Self-greening, where systems rewrite or weaken assertions instead of surfacing the real failure, just to meet the goal of keeping results green.

- Context gaps, where product constraints are ignored, or earlier outputs are not remembered in upcoming iterations.

- Placation, where AI changes its answers whenever any concern is raised or a follow-up question is asked.

- Shallow test coverage focused on happy paths instead of questioning the assumptions.

- Noise amplification, where AI tools produce information that is not relevant to the user's needs.

These are not edge cases. They are normal failure modes. Ignoring them creates quality debt and risks.
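Self-greening in particular can be partly caught by a mechanical check. The following is a minimal sketch, assuming the tests are written in Python: it compares the number of `assert` statements in a test before and after an AI edit. The function names and sample tests are hypothetical, and the check is deliberately crude. It will not notice an assertion that was weakened rather than removed, so it supports human review rather than replacing it.

```python
import ast


def count_asserts(source: str) -> int:
    """Count `assert` statements in a piece of Python test source."""
    tree = ast.parse(source)  # parses only; the test code is never executed
    return sum(isinstance(node, ast.Assert) for node in ast.walk(tree))


def looks_self_greened(before: str, after: str) -> bool:
    """Flag an edit that ends with fewer assertions than it started with."""
    return count_asserts(after) < count_asserts(before)


# Hypothetical test before the AI tool "fixed" a red build.
BEFORE = '''
def test_discount():
    total = apply_discount(100, 0.2)
    assert total == 80
    assert total >= 0
'''

# The same test afterwards: the failing assertion has quietly disappeared.
AFTER = '''
def test_discount():
    total = apply_discount(100, 0.2)
    assert total >= 0
'''

print(looks_self_greened(BEFORE, AFTER))  # True
```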

Various popular AI tool syndromes in QA

How AI-Only QA Builds Hidden Quality Debt

Tech and quality debt is like financial debt. Some of it is deliberate and short-term, taken on to address the immediate needs at hand. However, AI tools often lead you into accidental, long-term debt.

AI tools:

- Optimize for completion, not consequence.

- Optimize for current goals. They do not worry about future failures.

- Suffer from multiple LLM syndromes and limitations.

When AI agents are used in isolation from a human expert:

- They end up generating more tests without improving coverage quality.

- They report more data, but it doesn’t increase system insight.

- They lack skepticism and critical evaluations.

- They amplify green signals.

This looks fine to start with. But you end up paying interest on quality debt later. Usually in production.

That is why the 2025 MIT report suggests that over 95% of Gen AI agents fail when scaled. They are wrappers around systems that lack judgment rooted in history, context, and experience.

Why Human Judgment Matters More Than Ever in AI QA

Human oversight is not about resisting progress or the adoption of tools. It is about understanding risk and creating a strategic alignment that fits your business needs.

AI tools can mimic technical fluency. They cannot replace lived expertise. Human expert testers do things AI still cannot:

- Interpret ambiguity and raise alarms

- Investigate why a result feels wrong

- Connect failures to customer impact and business risks

- Change strategy mid-test or abandon testing if required

- Think critically about test results

Skepticism is a skill. Healthy doubt protects systems and user trust. Blind belief weakens them. You are not slow because you think. You are careful.

This care is the real strength of AI-assisted QA.

AI as an Augmentation Force in QA

AI in QA works best when it extends human capability.

If your system fails, your customers won’t blame the AI model. They will blame you for trusting it blindly. Strong QA teams accept this reality. That’s why they design expert-in-the-loop QA systems. They treat AI in testing as leverage, not an authority.

Think of AI models as stored experience and skills. Humans also operate with internal models and diverse skills built through practice and failure. Hybrid QA combines both.

Real hybrid QA use cases include:

- AI suggests test ideas that a human filters and refines

- AI summarizes logs that a human interprets and maps to business risks

- AI highlights risky areas that a human explores through focused charters

- AI accelerates regression checks while a human tester focuses on exploration

Strategic augmentation beats full delegation.

Human-in-the-Loop Checklist for AI-Assisted QA

This checklist is meant to be used during real testing work, not read once and forgotten. Apply it every time AI contributes to test design, execution, analysis, or reporting. The goal of this checklist is to keep judgment with humans while using AI for speed and assistance.

1. Mandatory human review

AI outputs are fast but shallow unless a human takes responsibility for understanding them.

- Read every AI-generated output end to end before putting it to any use

- Re-read AI outputs before sharing them with other members of your team

- Do not approve based on summaries, highlights, or green indicators

2. Critical thinking gate

AI can sound confident even when it is wrong. This step forces deliberate skepticism.

- Ask yourself if this makes sense for your specific product

- Look for things that might be missed or omitted by AI

- Consider what would break if this output is wrong (risk analysis)

3. Assumption and hallucination check

AI often fills gaps with plausible answers. This check exposes those gaps.

- List known assumptions in the prompt to AI systems

- Validate output against:

  - Trusted references (Oracles)

  - Real data and requirements

  - Project context

- Treat unverifiable information as hallucinations
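Part of this check can be automated when requirements have stable identifiers. The sketch below assumes each AI-generated test lists the requirement IDs it claims to cover, and flags any test that cites nothing or cites an ID missing from a trusted list. The `GeneratedTest` type, the `REQ-…` IDs, and the test names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GeneratedTest:
    name: str
    requirement_ids: tuple[str, ...]  # requirements the AI claims to cover


def unverifiable(tests: list[GeneratedTest], known_requirements: set[str]) -> list[str]:
    """Return names of tests that cite no requirement or an unknown one."""
    flagged = []
    for test in tests:
        cited = set(test.requirement_ids)
        if not cited or not cited <= known_requirements:
            flagged.append(test.name)
    return flagged


KNOWN = {"REQ-101", "REQ-102"}  # hypothetical IDs taken from a trusted oracle
tests = [
    GeneratedTest("login_with_valid_password", ("REQ-101",)),
    GeneratedTest("login_with_face_id", ("REQ-999",)),  # not in the oracle
    GeneratedTest("logout_clears_session", ()),  # cites nothing
]
print(unverifiable(tests, KNOWN))  # ['login_with_face_id', 'logout_clears_session']
```

A flagged test is not necessarily wrong. It is simply unverifiable until a human traces it back to a real requirement or discards it.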

4. Coverage depth check

AI testing tends to favor success paths. This step protects against shallow coverage.

- Confirm failure paths and negative tests are also included

- Check for edge cases and boundary conditions

- Reject duplicate or redundant outputs from AI interactions.
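A quick script can support this check before the human review starts. This is a sketch only, assuming each generated test case has been labeled with a kind (`happy`, `negative`, or `boundary`). The labels and sample cases are illustrative, and exact-match duplicate detection will miss paraphrased repeats.

```python
from collections import Counter

REQUIRED_KINDS = {"happy", "negative", "boundary"}  # assumed labels


def coverage_gaps(cases: list[tuple[str, str]]) -> set[str]:
    """Return required kinds that have no test case. Each case is (kind, description)."""
    present = {kind for kind, _ in cases}
    return REQUIRED_KINDS - present


def duplicates(cases: list[tuple[str, str]]) -> list[str]:
    """Return descriptions that repeat after normalizing case and whitespace."""
    counts = Counter(" ".join(desc.lower().split()) for _, desc in cases)
    return sorted(desc for desc, n in counts.items() if n > 1)


cases = [
    ("happy", "User logs in with valid password"),
    ("happy", "user logs in  with valid password"),  # same idea, reworded spacing
    ("negative", "User logs in with wrong password"),
]
print(coverage_gaps(cases))  # {'boundary'}
print(duplicates(cases))     # ['user logs in with valid password']
```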

5. Quality scan

Low-quality AI output follows recognizable patterns. Spotting them early saves time.

- Watch out for generic ideas that fit any system

- Flag patterned or overly polished language

- Reject outputs lacking your product-specific detail

6. Human control enforcement

How you interact with AI determines how much control you retain.

- Use ask mode, not agent mode

- Break work into small, clear tasks that AI can take up

- Ask it to ask you clarifying questions before starting work on any task

7. Ownership and documentation

Quality decisions must always belong to a human, not a system.

- Assign a named human owner for every decision

- Document where AI contributed. Document its role in your test strategy

- Final release judgment should always be with humans
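The ownership rules above can also be expressed as a simple release gate. The sketch below is one possible shape, not a prescribed process: the `QualityDecision` record, its fields, and the sample entries are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class QualityDecision:
    summary: str
    owner: str            # named human accountable for the decision
    ai_contribution: str  # where AI was involved, e.g. "drafted test ideas"
    human_approved: bool = False


def release_blockers(decisions: list[QualityDecision]) -> list[str]:
    """List every reason the release cannot go ahead yet."""
    problems = []
    for decision in decisions:
        if not decision.owner.strip():
            problems.append(f"{decision.summary}: no named owner")
        if not decision.human_approved:
            problems.append(f"{decision.summary}: not approved by a human")
    return problems


decisions = [
    QualityDecision("Ship checkout v2", "Priya", "AI drafted regression tests", True),
    QualityDecision("Skip load test", "", "AI summarized logs"),
]
for problem in release_blockers(decisions):
    print(problem)
```

An empty list means every decision has a named owner and an explicit human approval. It says nothing about whether the decisions themselves are good, which remains a human call.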

This checklist is designed to protect your decision-making authority. AI can help you move faster. But you will always remain accountable for the quality of the work that you get done with AI systems.

The Decision You Cannot Automate Away in AI QA

Every AI testing system reflects a choice. A choice about what you trust. A choice about who decides when software is ready. You cannot outsource that choice to a dynamically changing algorithm whose behaviour is obscure and over which you have little control.

The teams that win with AI-assisted QA are not the ones chasing autonomy. They are the ones designing judgment loops.

But what does a well-designed judgment loop actually look like in practice? In Part 3 of this series, we explore Agentic QA: a model in which AI agents operate continuously under expert guidance, combining automation at scale with human accountability.

This is your call to action:

- Reassess your AI testing strategy.

- Design expert-in-the-loop QA deliberately.

- Use AI to move faster, not to think less.

Quality still belongs to you!

Frequently Asked Questions

What is AI-only QA?

AI-only QA is a testing approach where artificial intelligence tools design, execute, and evaluate tests without meaningful human oversight. In this model, AI systems generate test cases, interpret results, and report outcomes independently. While this can increase speed, it removes human judgment from critical quality decisions.

Why is AI-only QA risky?

AI-only QA is risky because AI systems optimize for output completion, not real-world consequence. They can produce polished results that appear correct but lack depth, context awareness, or risk sensitivity. Without human review, teams may develop false confidence in test coverage and system reliability.

What are common failure modes of AI in QA?

AI testing tools commonly exhibit failure patterns such as hallucinated test validations, shallow happy-path coverage, self-greening behavior, and context gaps. These are not rare edge cases but predictable limitations of probabilistic systems. Understanding these syndromes is critical before building an AI-driven QA strategy.

Why are AI systems less predictable than traditional test automation?

Traditional automation is deterministic, meaning the same input produces the same output. AI systems are probabilistic, meaning small changes in prompts, data, or context can produce different results. This non-deterministic behavior makes regression stability and repeatability harder to achieve without human oversight.

What is human-in-the-loop QA?

Human-in-the-loop QA is a model where AI assists with speed and automation, but humans retain decision-making authority. Experts review AI outputs, validate assumptions, question inconsistencies, and assess business risk. AI accelerates execution, while humans protect judgment and accountability.

How should teams balance AI and human expertise in QA?

Teams should treat AI as an augmentation tool, not an autonomous authority. AI can accelerate repetitive tasks such as test generation and log analysis. However, humans should design strategy, evaluate risk, and approve release readiness. Strategic augmentation outperforms full delegation in AI-assisted QA.