- AI-driven development removes the creation bottleneck and creates a validation bottleneck. QA must evolve from execution-heavy testing to decision-focused quality leadership.

- Running more tests is no longer the hard part. Managing complexity, modeling risk, and defining what is “safe enough to ship” are now the real challenges.

- AI in QA amplifies execution, but human judgment defines consequence. Business impact analysis, ethical responsibility, and risk trade-offs cannot be automated away.

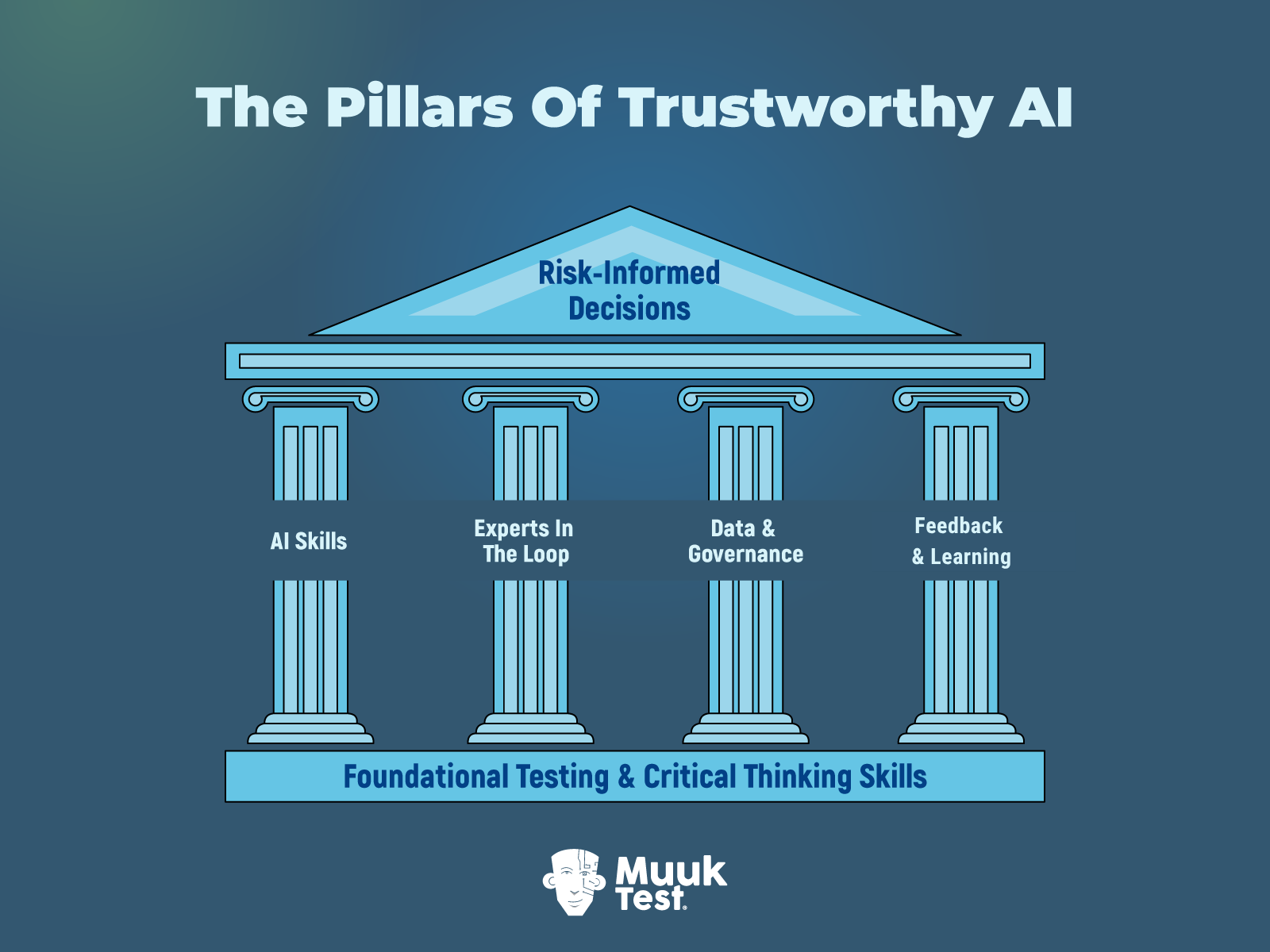

- Trustworthy AI in QA depends on four pillars: AI literacy, expert-in-the-loop accountability, strong data governance, and continuous feedback loops.

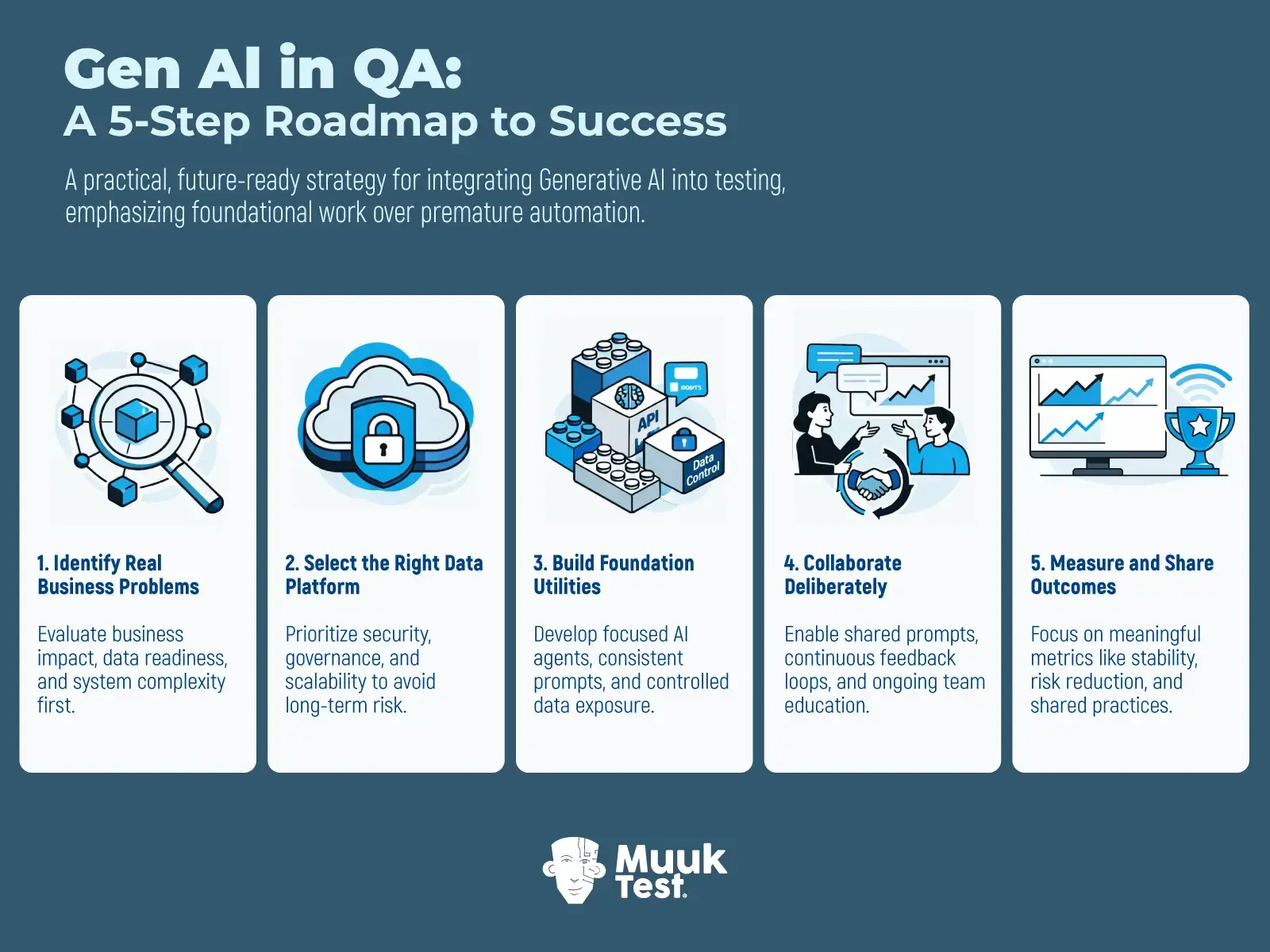

- The 2026 roadmap for AI-assisted QA is about intentional adoption. Identify business problems first, build secure foundations, collaborate deliberately, and measure meaningful outcomes.

This post is part of a 4-part series, Fight Fire with Fire - QA at the Speed of AI-Driven Development:

1. What to Do When QA Can’t Keep Up With AI-Assisted Development

2. The Myth of AI-Only QA: Why Human Oversight Still Matters

3. Agentic QA: Combining AI Agents & Human Expertise for Smarter Testing

4. Rewriting the QA Playbook for an AI-Driven Future ← You're here

Writing code feels effortless now. Too effortless. Code no longer arrives through the traditional cycles. It is generated through AI. Features stack up quickly. Release milestones are hit faster. What used to feel like progress now feels like motion without friction, and motion without friction is exactly how complexity piles up.

Most QA models were not built for this world. They come from a time when change was slower, systems were smaller, and boundaries were clearly visible. That logic collapses when AI reshapes the product faster than anyone can fully comprehend it.

This creates a significant pain point for everyone who works on or cares about quality outcomes. Speed is no longer the main problem. You already have speed. What’s missing is the ability to look at a fast-moving system and say, with conviction, this is safe enough to ship.

The way forward is not more execution. It is a shift in thinking. QA needs to move toward decision-making, risk awareness, and strategic ownership of quality. This article breaks down what is changing, why it matters, and how you can adapt your QA strategy for an AI-driven future.

Emerging Trends Shaping AI in QA

AI in QA sits inside a much larger shift in how software is built, changed, and expanded.

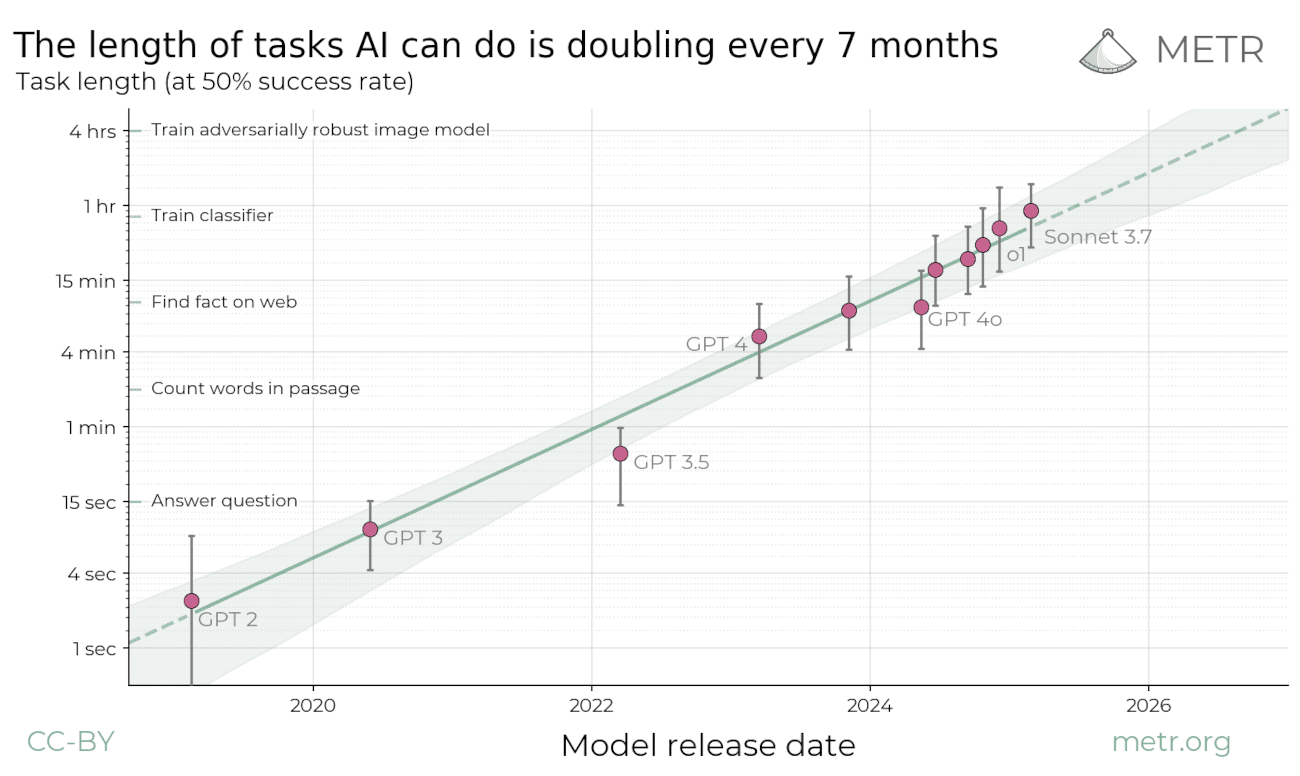

Industry signals point in one direction. The scope of tasks AI can handle is growing fast. Faster than teams can comfortably absorb. Some estimates suggest that capability doubles roughly every seven months.
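To get a feel for what that estimate implies, here is a minimal Python sketch of the compounding arithmetic. It assumes the seven-month doubling holds steadily, which is a simplification, and the function name `growth_factor` is only illustrative.

```python
def growth_factor(months: float, doubling_period_months: float = 7.0) -> float:
    """Return how many times capability multiplies after `months`,
    assuming a steady doubling every `doubling_period_months` (an assumption)."""
    return 2 ** (months / doubling_period_months)


for horizon in (7, 12, 24):
    print(f"{horizon} months: x{growth_factor(horizon):.1f}")
```

Under that assumption, one year works out to roughly a 3.3x jump and two years to roughly 10.8x, which is why planning QA capacity around last year's pace tends to fall short.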

Teams can now build systems that were previously impractical, fragile, or outright impossible in a limited time frame. More logic. More integrations. More surface area. The ceiling rises, and so does the risk.

This reshapes QA in three fundamental ways.

1. Coding Speed Is No Longer the Constraint. Test generation will accelerate. Environments can now be spun up quickly. Data can be generated on demand. Execution will scale with less friction. Running more checks is no longer the hard part.

2. Complexity Is the New Hidden Tax. AI-driven development allows many things to coexist: features, risks, poorly structured code, open edge cases, and more. Risk spreads quietly and increases with each new release.

3. AI Needs Strategic Guidance and Clarity to Deliver Value. AI can help you build and test better only if:

- You know what you want

- You know what should be done

- You know what matters and what doesn’t

Without clarity, AI will just add to the confusion faster. This is why AI in QA is not just a tooling discussion. It is a thinking shift.

How AI-Driven Development Is Changing QA

To understand QA transformation, you need to understand what has changed across the software delivery process.

Building without AI (or traditional software development) usually follows a familiar pattern.

- Define the product idea

- Conceptualize that via high-level requirements

- Engineer the design and architecture

- Write code to model the design and architecture

- Build components and integrate them

- Test the end product

- Capture feedback from the field and customers

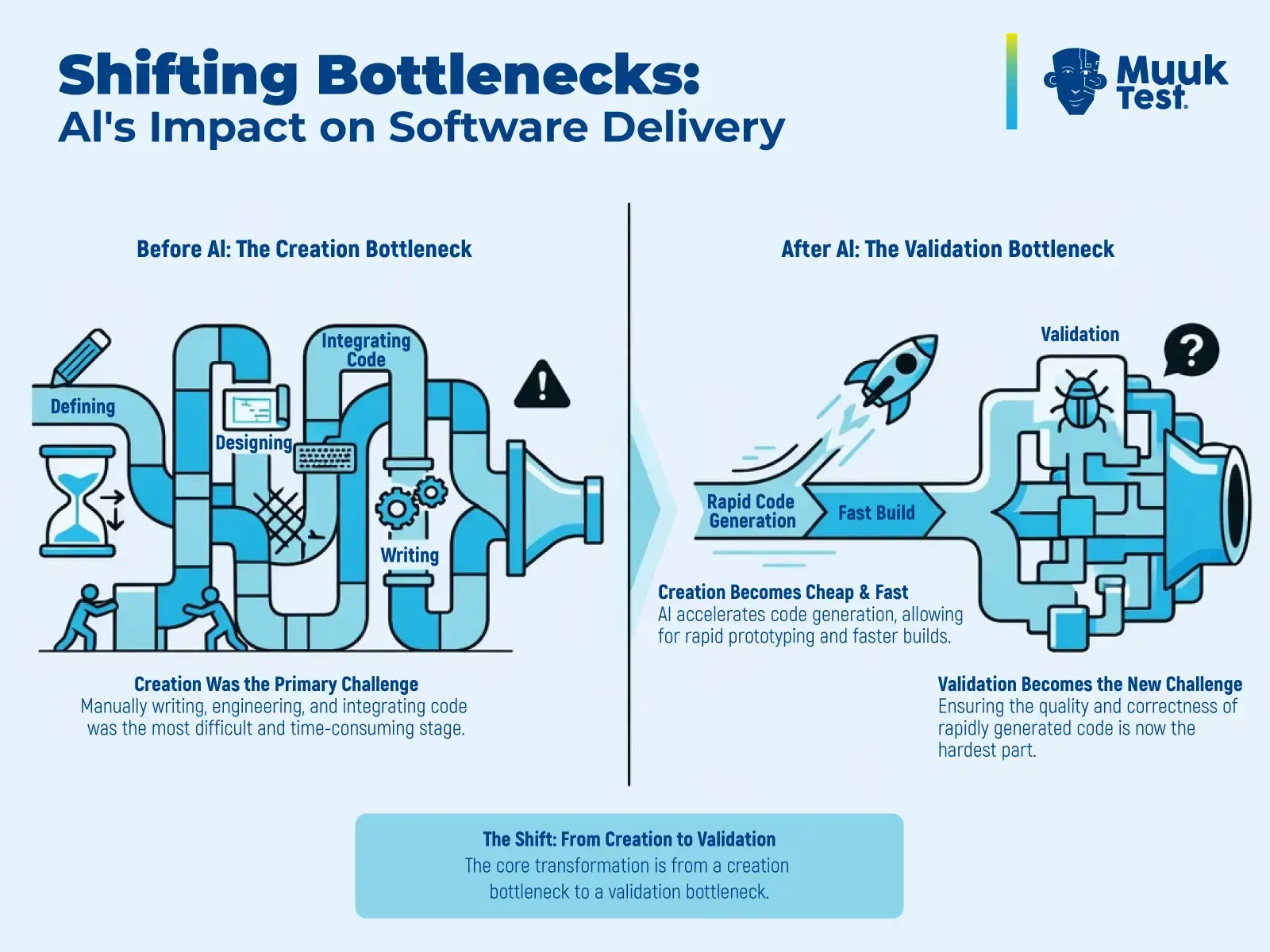

Here, the bottleneck was the creation process.

However, building with AI looks different.

- Engineers move faster as generating code is cheap

- Early prototypes and builds come together faster

- Creation becomes inexpensive

In short:

- Creation becomes easier.

- Validation becomes harder.

The shift:

- From the creation bottleneck

- To the validation bottleneck

You may already be noticing this. More features reach testing faster. Fewer decisions are questioned early. QA gets involved later with less context and more pressure. This turns QA into a validation bottleneck if nothing changes.
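The shift from a creation bottleneck to a validation bottleneck can be sketched as simple queue arithmetic. The Python below is a toy model, not a measurement: the function name and the weekly rates are hypothetical, and it assumes validation capacity stays flat while creation speeds up.

```python
def validation_backlog(created_per_week: int, validated_per_week: int, weeks: int) -> list[int]:
    """Return the backlog of unvalidated changes at the end of each week."""
    backlog = 0
    history = []
    for _ in range(weeks):
        # New changes arrive, validated changes leave; the backlog cannot go negative.
        backlog = max(0, backlog + created_per_week - validated_per_week)
        history.append(backlog)
    return history


print(validation_backlog(10, 10, 4))  # creation matches validation: [0, 0, 0, 0]
print(validation_backlog(25, 10, 4))  # faster creation, same validation: [15, 30, 45, 60]
```

In this toy model the backlog grows linearly once creation outpaces validation, so running the existing checks harder only postpones the problem. The lever is deciding earlier what actually needs validating.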

If QA stays execution-focused, it will become the biggest bottleneck in your system. However, if QA moves upstream using continuous testing, it will become a quality lever.

Our previous article in this series, on Agentic QA and the MuukTest E-A-T approach, already covers how to fill this gap smartly. Instead of reacting to outputs, QA can help shape what should be built, what risks matter, and what signals deserve attention.

AI in QA and our Agentic QA approach support this shift by reducing execution cost. That frees time for judgment, analysis, and communication. That is where QA experts can add unique value to AI-driven development.

QA Skills AI Cannot Replace

AI can assist with many tasks. But it cannot be a reliable decision maker. Companies that have experimented with agentic AI decision-making have paid a high price for its failures. The closer you get to meaning, impact, and consequence, the more human the work becomes. AI cannot replace these skills that an expert QA brings:

Business Context and Impact Awareness

Business focus is not optional. It never was. AI can generate dashboards, logs, and test results all day long, but it cannot decide what failure means in your business context or which risks are worth taking when trade-offs are unavoidable. That responsibility stays with you.

An expert QA understands:

- Business impact

- Test outcomes

- Failure costs

- Support costs and much more

AI is no match for all this. AI can pinpoint issues, but a reliable human expert has to evaluate and analyze the flags carefully.

Risk Modeling, Responsibility, and Ethics

Risk, responsibility, and ethics are essential skills that fall within the same domain. QA experts have to model uncertainty. They have to decide what is acceptable.

An expert QA performs the functions of:

- Risk modeling

- Risk mitigation

- Setting ethical boundaries

- Guarding quality criteria

These are judgment calls, and they cannot simply be delegated to a stochastic model. Human experts decide which trade-offs are acceptable. They own responsibility and ethics.
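One common way to make such judgment calls visible is a likelihood-times-impact score. The Python sketch below is an illustration of that general convention, not a method this article prescribes. The `Risk` class, the 1 to 5 scales, and the example risks are all hypothetical, and the ratings are meant to come from a human expert rather than a model.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    name: str
    likelihood: int  # 1 (rare) to 5 (almost certain), rated by a human expert
    impact: int      # 1 (minor) to 5 (severe business impact), rated by a human expert

    @property
    def score(self) -> int:
        # Simple likelihood x impact convention; other weightings are possible.
        return self.likelihood * self.impact


def rank_risks(risks: list[Risk]) -> list[Risk]:
    """Order risks from highest to lowest score."""
    return sorted(risks, key=lambda r: r.score, reverse=True)


# Hypothetical examples, for illustration only.
risks = [
    Risk("Checkout payment failure", likelihood=2, impact=5),
    Risk("Typo on settings page", likelihood=4, impact=1),
    Risk("Stale search results", likelihood=3, impact=3),
]
for risk in rank_risks(risks):
    print(f"{risk.score:>2}  {risk.name}")
```

The score only orders the conversation. Whether a 9 is acceptable for this release is still a decision a person has to own.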

Deep System Understanding

Good testing is not just about validating things on a surface level. It’s rooted in depth. Strong QA is built on understanding. Knowing how systems behave, how failures propagate, and why certain tests exist at all. Without this core understanding, AI-generated outputs risk becoming noise rather than meaningful insights.

Understanding in depth involves:

- Mastering first principles thinking

- Mastering logic and reasoning

- Mastering the fundamentals of testing

- Asking why before how

Critical Thinking and Signal Evaluation

AI generates valuable output as well as noise at scale. Critical thinking is your filter against the AI noise. Good evaluation helps you keep track of what has changed and why. You know the pulse of your system.

An expert QA actively:

- Filters AI noise

- Tracks meaningful change

- Validates against the latest context

Human Skills That Compound Strategic Value

AI has not flattened every skill. Some skills have compounded in value because of AI. They are the human skills.

As systems accelerate, these human skills have become highly valuable:

- Communication

- Persuasion

- Questioning

- Storytelling

Communication turns risk into something people can actually understand. Persuasion influences the decisions and prevents damage. Questioning exposes costly assumptions. Storytelling connects actions to consequences, especially for leaders who aren’t part of the day-to-day execution details.

These skills amplify everything else you do. Without them, even a correct analysis fails to deliver its value.

There is also a resurgence of testing skills that cannot be rushed, such as usability, accessibility, and UX testing. These demand empathy, context, and patience. AI can help here, but only as support.

Strategic Pillars for Trustworthy AI in QA

To adapt sustainably, QA needs strong foundations. These foundations can be described as strategic pillars that support better decisions. These pillars combine human judgment, technical capability, and organizational discipline into a system that can carry responsibility.

AI Skills as Operational Leverage

AI skills provide operational leverage. They help you understand what tools can and cannot do. Developing these skills prevents blind reliance on AI. Used correctly, they turn AI into a capable assistant.

Key capabilities include:

- AI literacy

- Prompt engineering

- Context engineering

- Tool orchestration

- Model evaluation

Here is a detailed guide on the skills required for an AI-assisted team, with learning resources and tips.

Expert-in-the-Loop as Decision Owners

Good AI systems revolve around expert humans. These experts are not just supervising AI. They are accountable for what it produces. Removing humans removes responsibility.

Humans act as:

- Goal setters

- Risk explorers

- Sense-makers

- Ethical owners

- Accountable decision-makers

This is a crucial part of responsible AI.

Data and Governance as Speed Enablers

Strong governance helps QA move faster by preventing confusion. Clear ownership, access control, and quality standards allow AI systems to operate safely and predictably.

Why it matters:

- Data integrity enables speed

- Poor data creates rework and mistrust

What it supports:

- Reliable AI assistance

- Confident decisions

- Reduced hidden risk

Feedback Loops as Competitive Advantage

AI systems can mimic understanding. They do not truly learn from feedback the way humans do. Humans who cannot reflect and adapt gain no advantage over AI tools.

Your ability to identify improvements, seek feedback, and apply what you learn is your edge. AI can help you move faster once you know what to improve.

These pillars work together. Weakness in any pillar distorts decisions. Even skilled humans and powerful tools fail without judgment and structure. Trustworthy AI emerges when all of them hold.

2026 Roadmap for AI-assisted QA

A future-ready QA strategy needs more than enthusiasm. It needs strategic steps and consistency. This is a practical roadmap you can adapt to your context to reshape QA in 2026 and beyond. This roadmap is designed to keep you in control of your quality and judgment.

Step 1: Identify Real Business Problems

Do not reach for the first tool or use case you see on the internet. Begin by understanding what actually hurts the business. Where failures cost money. Where delays erode trust. Where complexity is already straining the system.

Before choosing any AI tool, get clear on three things:

- Business impact

- Data readiness

- System complexity

We all work on novel projects with novel constraints and novel goals. This step aligns you with your novelty and context. Skipping this step will render downstream efforts ineffective.

Step 2: Select the Right Data Platform

Select data platforms with care. Strong platforms create options and possibilities in the long run. Weak platforms do the opposite. For enterprise-level work, security, governance, and scalability are primary requirements.

Weak foundations create long-term risks and make your outcomes untrustworthy. Remember, if you cannot trust a vendor with your data, you cannot trust their AI capabilities either.

Step 3: Build Foundation Utilities

Advanced agents are appealing but risky. Resist the temptation to use them directly. Start with foundation utilities. Create consistent procedures through prompts. Build narrow, well-scoped agents that reliably solve specific problems. Control data exposure, especially when external systems are involved.

You can set up things like:

- Prompt collection (or hub)

- Reference testing agents (to work with and extend)

- Micro utilities for testing (that leverage AI tools)

Focus on precision and deterministic results over the breadth of applying AI to every stage.
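As a starting point, a prompt collection can be as small as a dictionary of versioned templates. The Python sketch below uses only the standard library. The hub layout, the `render_prompt` helper, and the prompt wording are all hypothetical, and a real team might keep the templates in version control rather than in memory.

```python
from string import Template

# Hypothetical in-memory prompt hub: (name, version) -> template.
PROMPT_HUB = {
    ("test_ideas", "v1"): Template(
        "You are assisting a QA engineer. List $count risk-focused test ideas "
        "for the feature below. Do not invent requirements.\n\nFeature: $feature"
    ),
}


def render_prompt(name: str, version: str, **fields: str) -> str:
    """Look up a shared prompt and fill in its fields.

    Template.substitute raises KeyError when a field is missing, so an
    incomplete prompt fails loudly instead of being sent to a model.
    """
    return PROMPT_HUB[(name, version)].substitute(**fields)


prompt = render_prompt("test_ideas", "v1", count="5", feature="Password reset via email")
print(prompt)
```

Pinning a version in the key means a prompt can change without silently altering the behavior of every utility that depends on it, which keeps results closer to repeatable.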

Step 4: Collaborate Deliberately

Collaboration matters. Gen AI work fails quietly in silos. Share prompts. Share agents. Test internally. Create feedback loops that actually close. Treat learning as a continuous activity, not a rollout phase.

When you collaborate on Gen AI with your team, you foster:

- Shared prompts and agents

- Continuous feedback loops

- Internal testing and learning

- Ongoing team education and AI skills

Tools will continue to evolve with time, but this step will evolve your team.

Step 5: Measure and Share Outcomes

With an evolving tech like Gen AI, measuring activities is a waste of time. Activities evolve as tech evolves. No two activities are directly comparable. That’s why it's essential to measure outcomes rather than activities. Shift your focus toward metrics that reflect stability, reduced risk, and real learning. Share what works. Retire what doesn’t.

Shift focus to:

- Meaningful metrics

- Shared practices

- Responsible democratization of Gen AI
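One example of an outcome metric, as opposed to an activity metric like "tests executed," is the defect escape rate: the share of defects that were only found in production. The Python sketch below is a minimal illustration under that definition. The function name and the release numbers are made up for the example, not real data.

```python
def defect_escape_rate(found_before_release: int, found_in_production: int) -> float:
    """Fraction of all known defects that escaped to production."""
    total = found_before_release + found_in_production
    if total == 0:
        return 0.0  # no defects recorded, so nothing escaped
    return found_in_production / total


# Made-up numbers for illustration: (found before release, found in production).
releases = {"2026.01": (46, 4), "2026.02": (38, 2)}
for name, (pre, prod) in releases.items():
    print(f"{name}: escape rate {defect_escape_rate(pre, prod):.0%}")
```

A falling escape rate says something about risk reaching customers. A rising test count, on its own, does not.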

This roadmap is not about moving fast. It’s about moving intentionally.

For deeper context on how teams struggle when QA remains execution-focused, this article on scaling pitfalls is worth reviewing. You can also find guidance on aligning QA efforts with meaningful metrics here.

The Future of QA in an AI-Driven World

The future of software testing is not about replacing humans with AI. AI does not remove humans from testing. It changes where human attention belongs. What matters going forward is not how much work gets executed, but how much thinking gets applied at the right moment.

AI-driven development pushes two things: speed and complexity. QA transformation is what allows teams to absorb both without losing control or credibility. When QA moves away from execution-heavy testing and toward decision-driven quality leadership, risk surfaces earlier. Fewer surprises come in production. Progress accelerates without eroding trust.

That is where AI in QA delivers its real value. Not by making teams faster alone, but by allowing speed and confidence to coexist. QA leaders must embrace this transformation not as a challenge, but as an opportunity to redefine their strategic role in the AI era.

Frequently Asked Questions

What is AI in QA?

AI in QA refers to the use of artificial intelligence to assist software testing activities such as test generation, defect detection, risk identification, and regression automation. However, AI in QA is not just about tooling. It requires strategic oversight, governance, and human decision-making to ensure speed does not replace accountability.

How is AI-driven development changing software testing?

AI-driven development removes the creation bottleneck by making code generation faster and cheaper. As a result, testing becomes the new bottleneck. QA must shift from execution-heavy testing to validation, risk modeling, and decision leadership to keep up with accelerating release cycles.

What skills will QA professionals need in an AI-driven future?

In an AI-driven future, QA professionals need strong business context awareness, risk modeling capability, ethical judgment, critical thinking, and communication skills. While AI can assist with execution, it cannot replace human responsibility for defining acceptable risk and release readiness.

Why is governance important in AI-assisted QA?

Governance ensures that AI tools operate within clear boundaries for data, security, and accountability. Without governance, AI outputs can amplify risk, introduce hidden complexity, and create false confidence. Strong governance enables speed by preventing confusion and reducing rework.

What is the validation bottleneck in AI-driven teams?

The validation bottleneck occurs when development accelerates with AI, while testing and decision-making processes do not keep pace. Features are created quickly, but evaluating risk, complexity, and business impact becomes harder, making QA the new system constraint.

What does a future-ready AI testing strategy look like?

A future-ready AI testing strategy focuses on business impact first, builds secure data foundations, develops AI literacy within teams, keeps experts in the loop for decision ownership, and measures meaningful quality outcomes instead of activity metrics.